Note

This is the legacy documentation (v1). For the latest version, please visit the current documentation. This section contains the original documentation that is being migrated to a new structure.



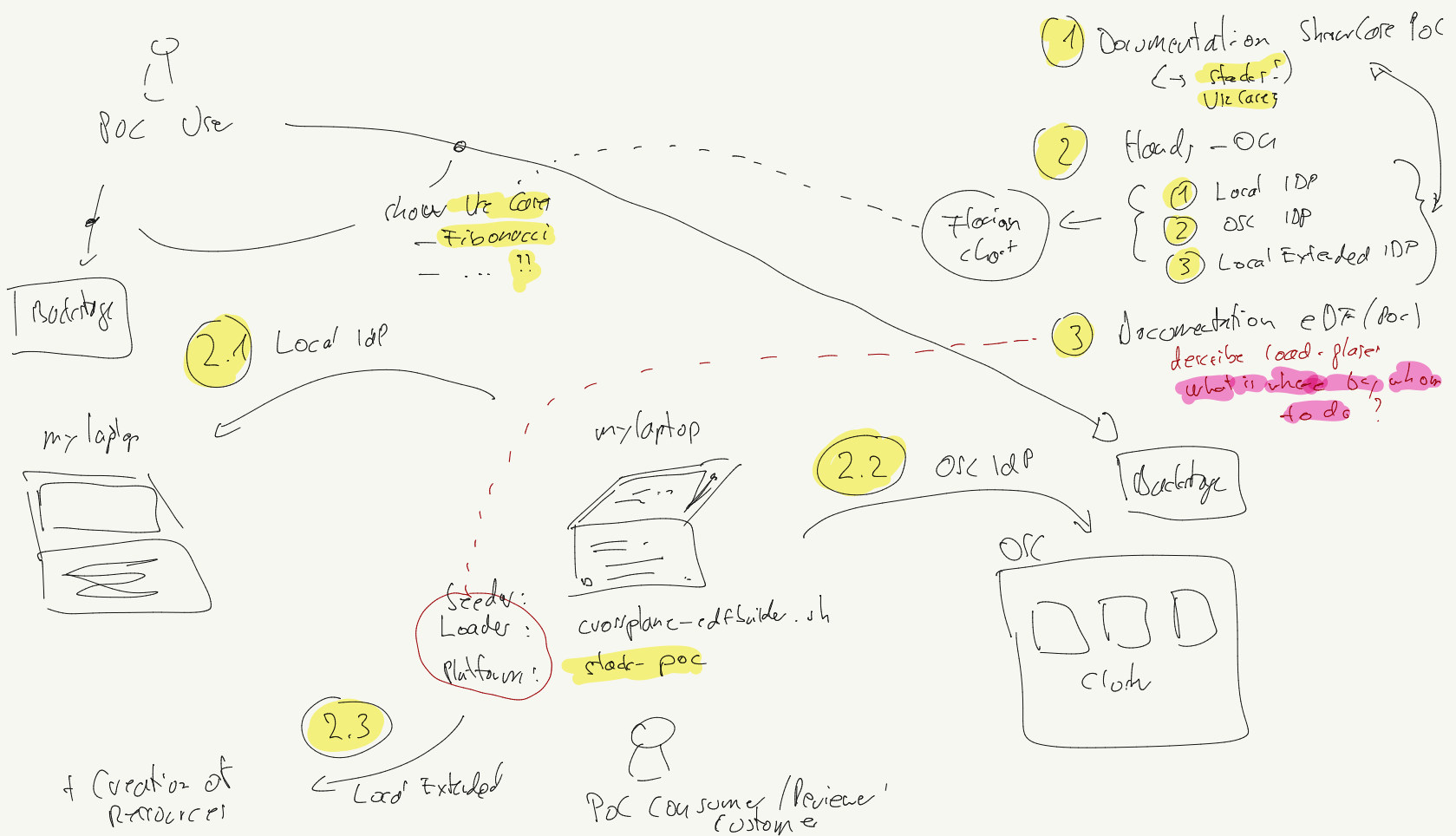

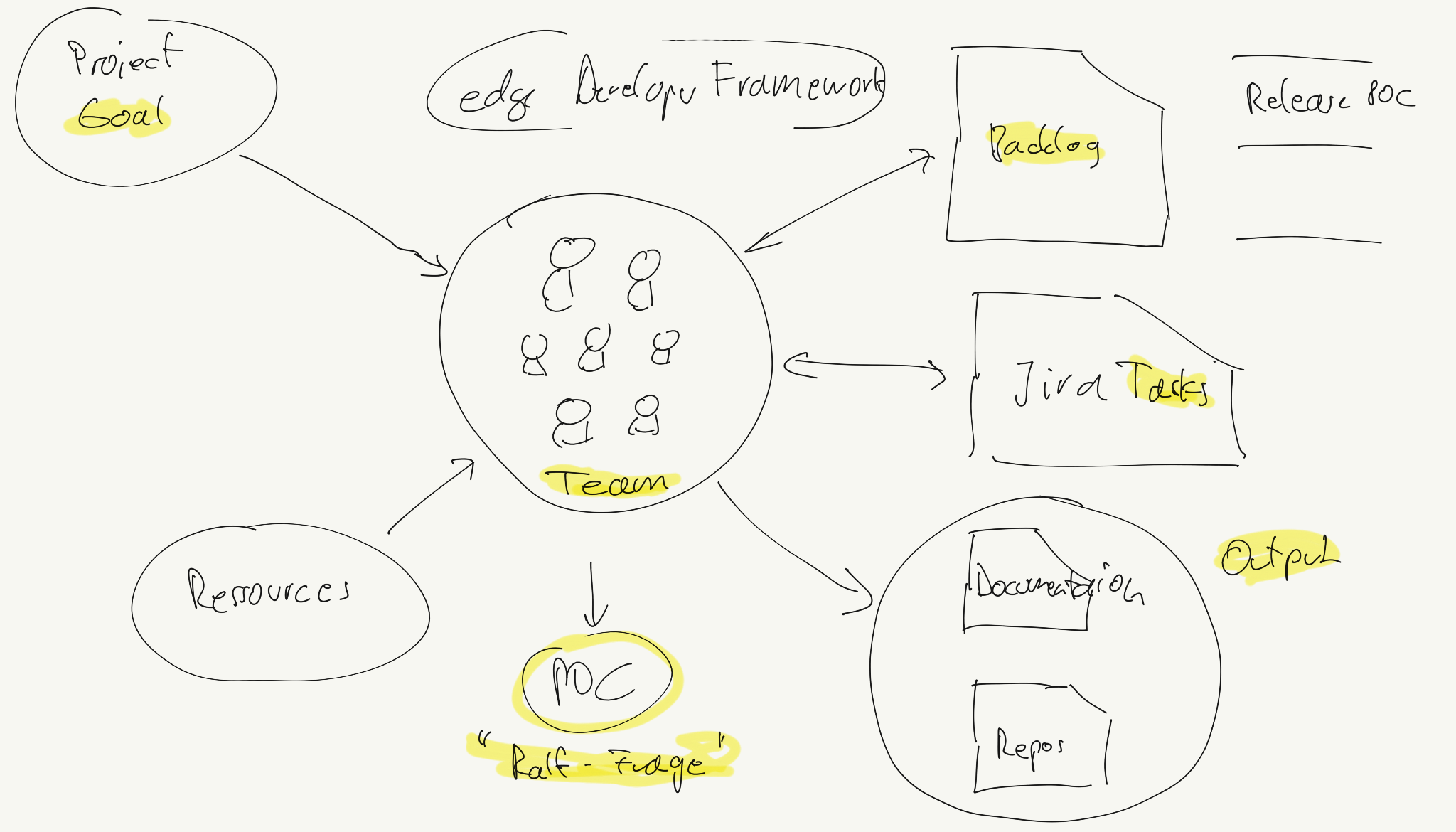

The challenge of IPCEI-CIS Developer Framework is to provide value for DTAG customers, and more specifically: for Developers of DTAG customers.

That’s why we need verifications, or test use cases, to guide the development of the Developer Framework.

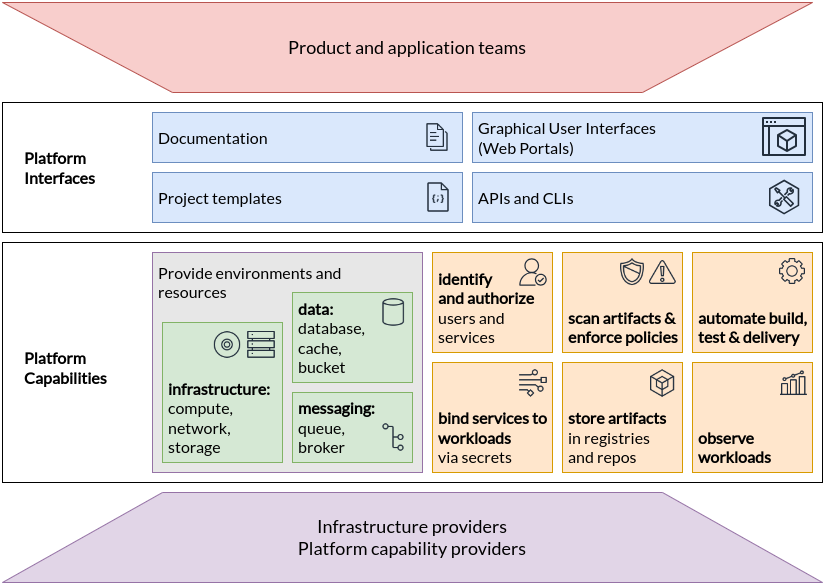

(source: https://tag-app-delivery.cncf.io/whitepapers/platforms/)


Here we have a collection of possible usage scenarios.

Deploy and develop the well-known Sock Shop demo:

See also mkdev fork: https://github.com/mkdev-me/microservices-demo

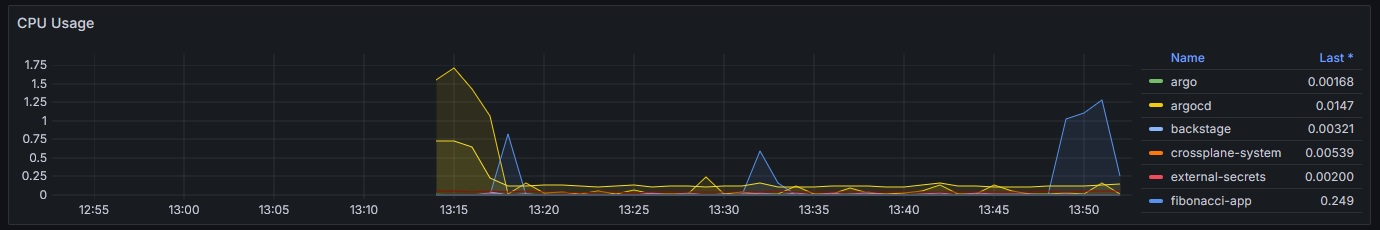

The Fibonacci App on the cluster can be accessed on the path https://cnoe.localtest.me/fibonacci. It can be called for example by using the URL https://cnoe.localtest.me/fibonacci?number=5000000.
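To trigger load with different input sizes, the request URL can be built programmatically. The following is a minimal sketch that only constructs the URL. The base URL and the `number` query parameter come from the text above, while the helper name `fibonacciUrl` is an assumption made for illustration.

```typescript
// Base URL taken from the text above.
const FIBONACCI_BASE_URL = "https://cnoe.localtest.me/fibonacci";

// Hypothetical helper: builds the request URL for a given input value
// using the standard URL API.
function fibonacciUrl(n: number): string {
  if (!Number.isInteger(n) || n < 0) {
    throw new RangeError(`number must be a non-negative integer, got ${n}`);
  }
  const url = new URL(FIBONACCI_BASE_URL);
  url.searchParams.set("number", String(n));
  return url.toString();
}

// Same URL as in the text above.
const exampleUrl = fibonacciUrl(5000000);
```

Larger values of `number` should produce a more pronounced spike on the dashboard, assuming the app's runtime grows with its input.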

The resulting resource spike can be observed on the Grafana dashboard “Kubernetes / Compute Resources / Cluster”. The resulting visualization should look similar to this:

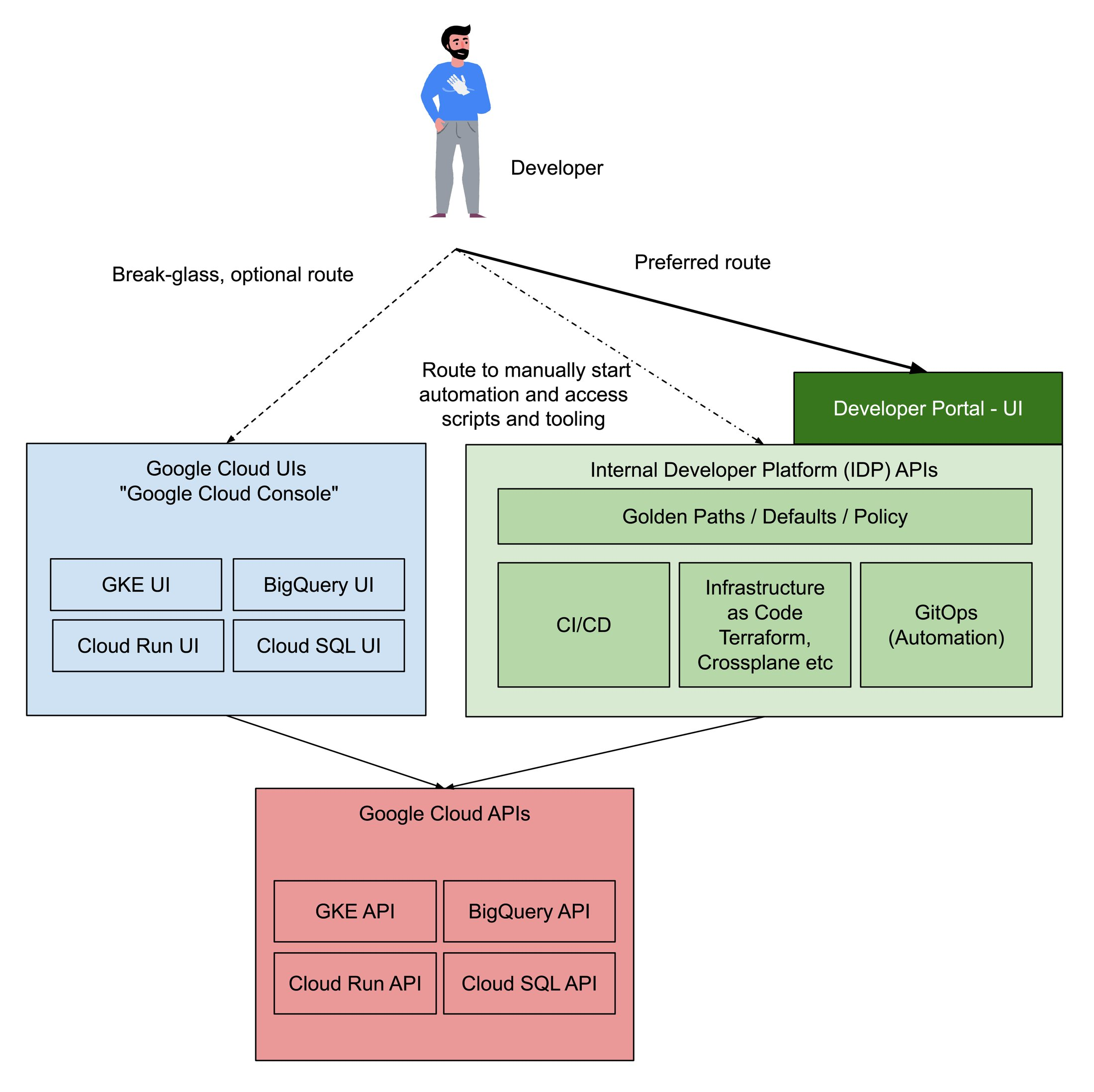

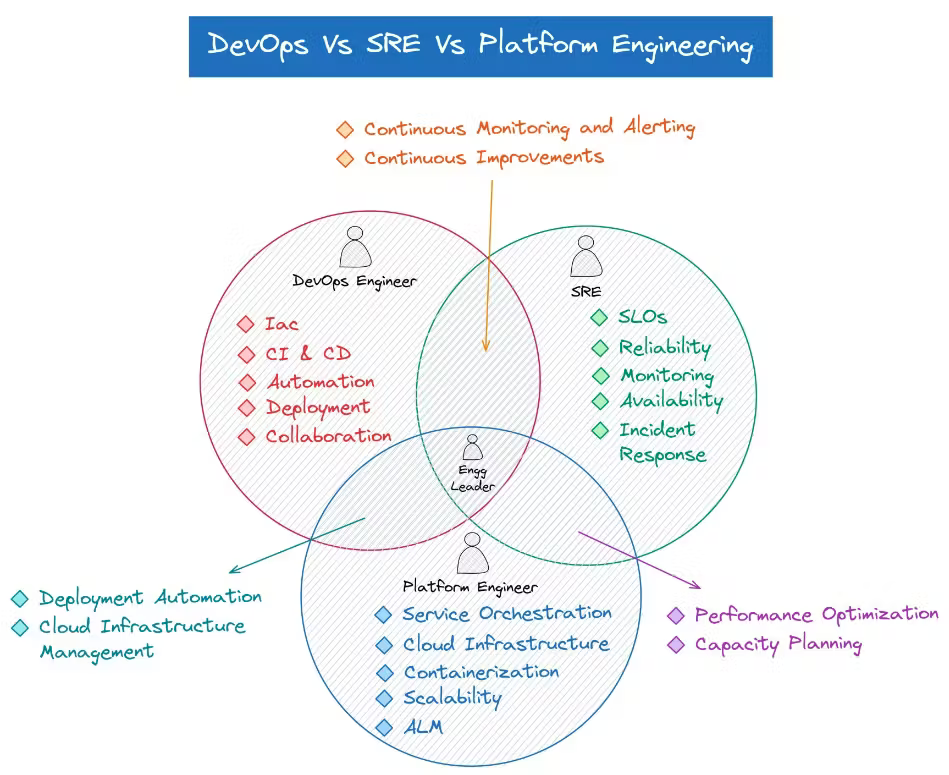

(source: https://cloud.google.com/blog/products/application-development/common-myths-about-platform-engineering?hl=en)

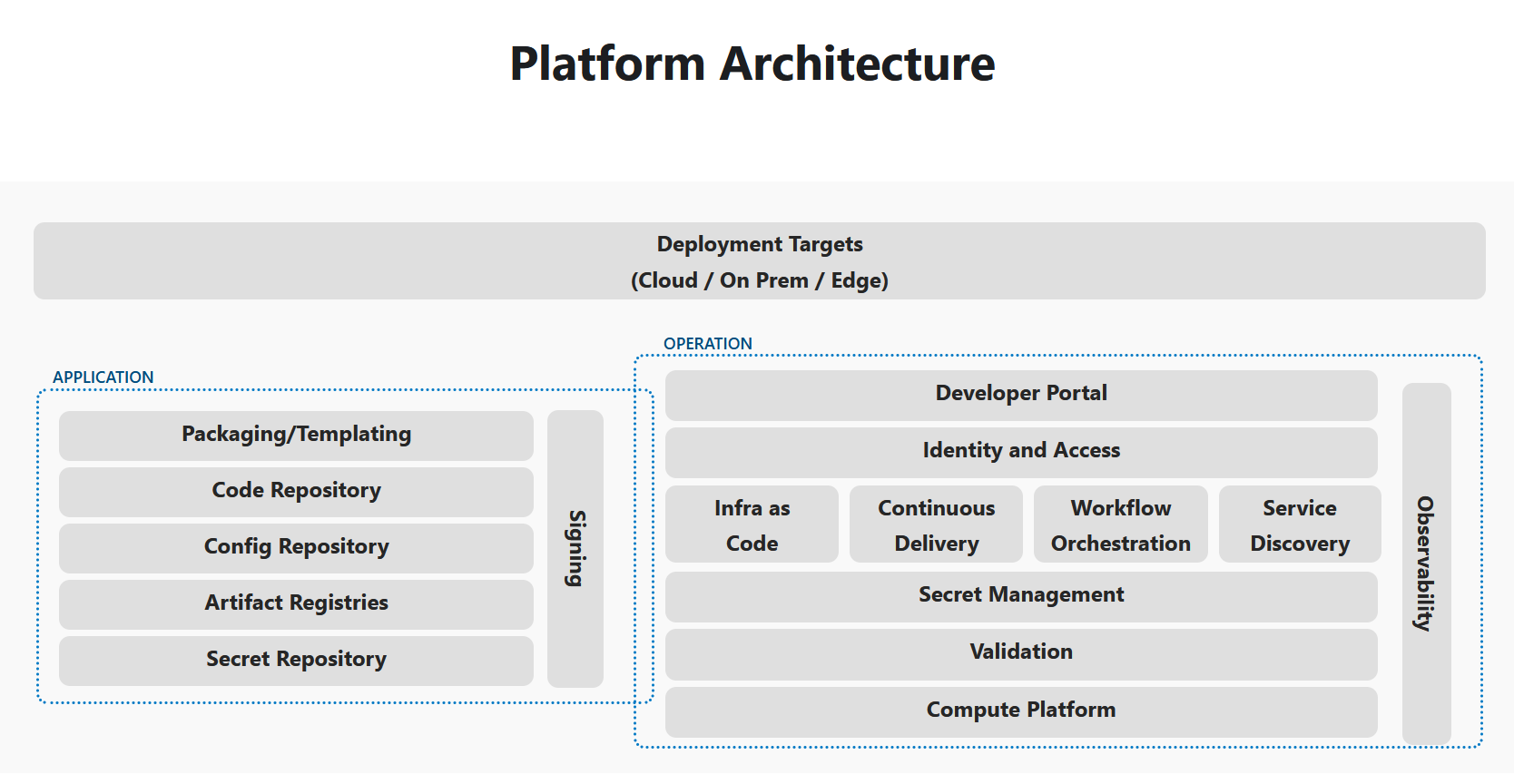

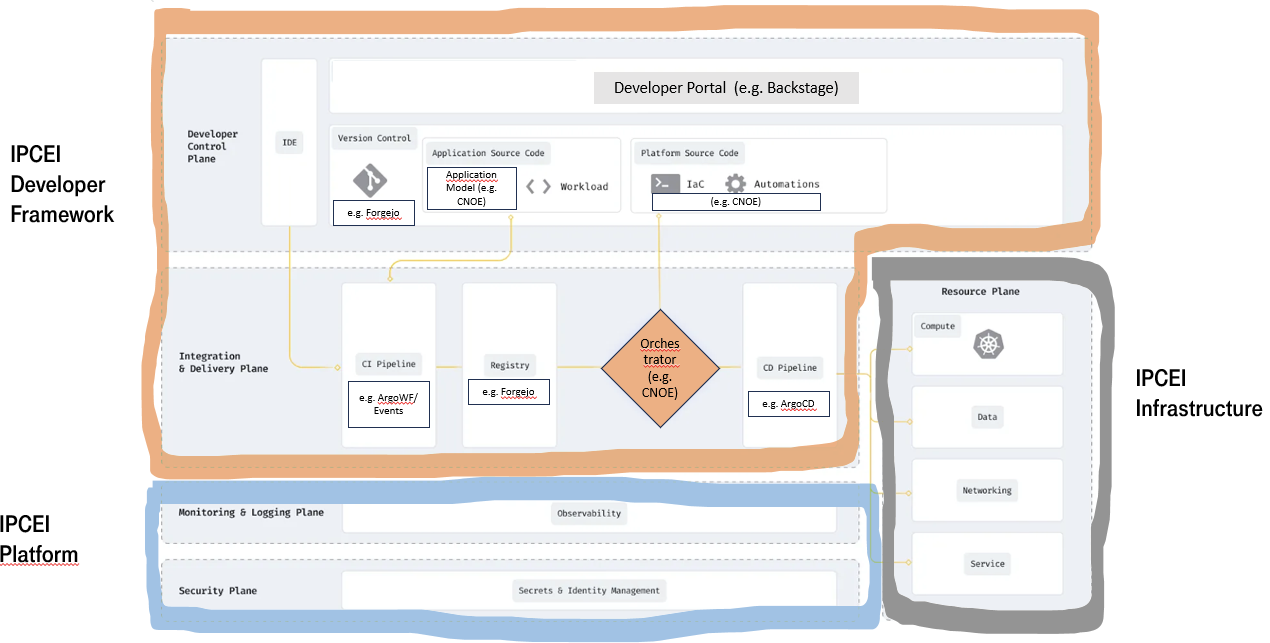

IPCEI-CIS Developer Framework is part of a cloud native technology stack. To design the capabilities and architecture of the Developer Framework we need to define the surrounding context and internal building blocks, both aligned with cutting edge cloud native methodologies and research results.

In CNCF the discipline of building stacks to enhance the developer experience is called ‘Platform Engineering’.

CNCF first asks why we need platform engineering:

The desire to refocus delivery teams on their core focus and reduce duplication of effort across the organisation has motivated enterprises to implement platforms for cloud-native computing. By investing in platforms, enterprises can:

- Reduce the cognitive load on product teams and thereby accelerate product development and delivery

- Improve reliability and resiliency of products relying on platform capabilities by dedicating experts to configure and manage them

- Accelerate product development and delivery by reusing and sharing platform tools and knowledge across many teams in an enterprise

- Reduce risk of security, regulatory and functional issues in products and services by governing platform capabilities and the users, tools and processes surrounding them

- Enable cost-effective and productive use of services from public clouds and other managed offerings by enabling delegation of implementations to those providers while maintaining control over user experience

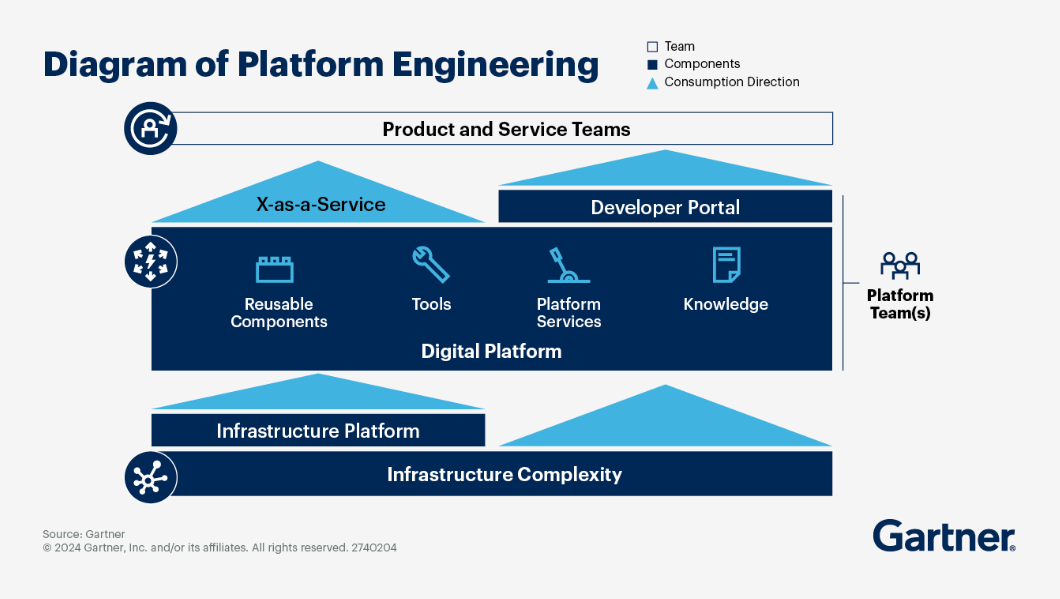

platformengineering.org’s Definition of Platform Engineering

Platform engineering is the discipline of designing and building toolchains and workflows that enable self-service capabilities for software engineering organizations in the cloud-native era. Platform engineers provide an integrated product most often referred to as an “Internal Developer Platform” covering the operational necessities of the entire lifecycle of an application.

https://humanitec.com/blog/wtf-internal-developer-platform-vs-internal-developer-portal-vs-paas

In IPCEI-CIS right now (July 2024) we are primarily interested in understanding how IDPs are built, as one option to implement an IDP is to build it ourselves.

The outcome of the Platform Engineering discipline, created by the platform engineering team, is a so-called ‘Internal Developer Platform’.

One of the first sites focusing on this discipline was internaldeveloperplatform.org.

The number of IDPs available as products is growing rapidly.

[TODO] LIST OF IDPs

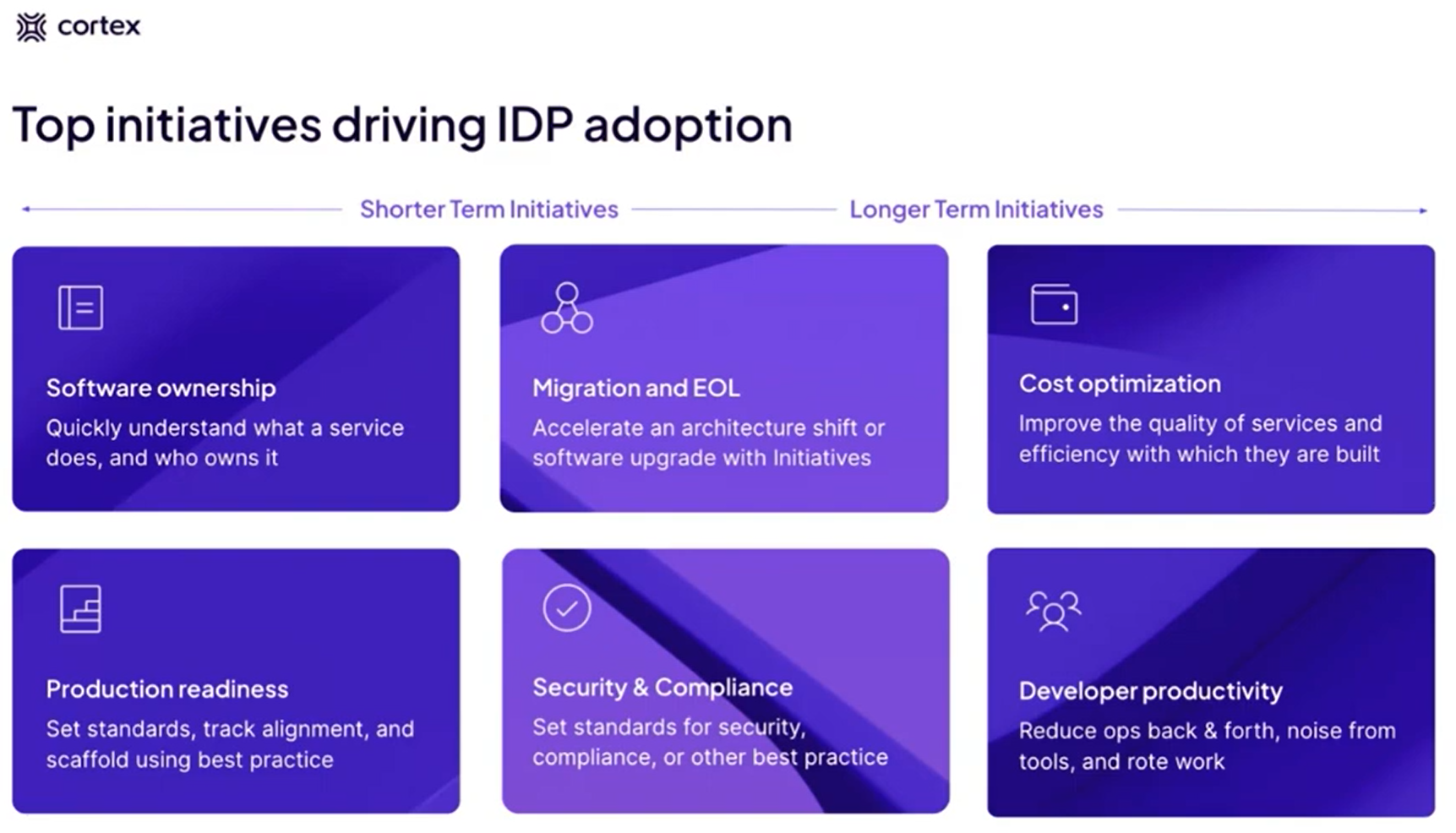

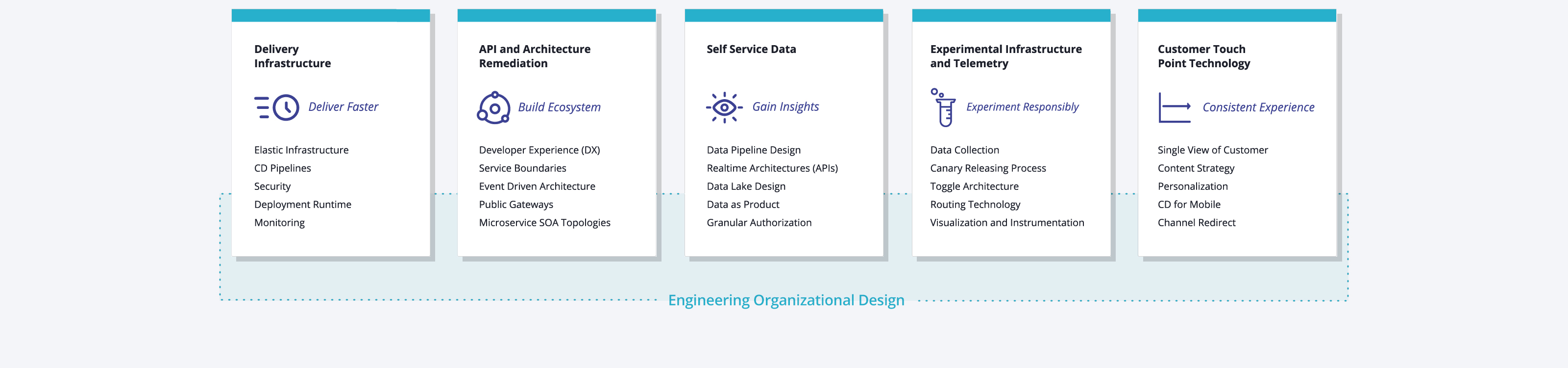

Cortex is talking about Use Cases (aka Initiatives): (or https://www.brighttalk.com/webcast/20257/601901)

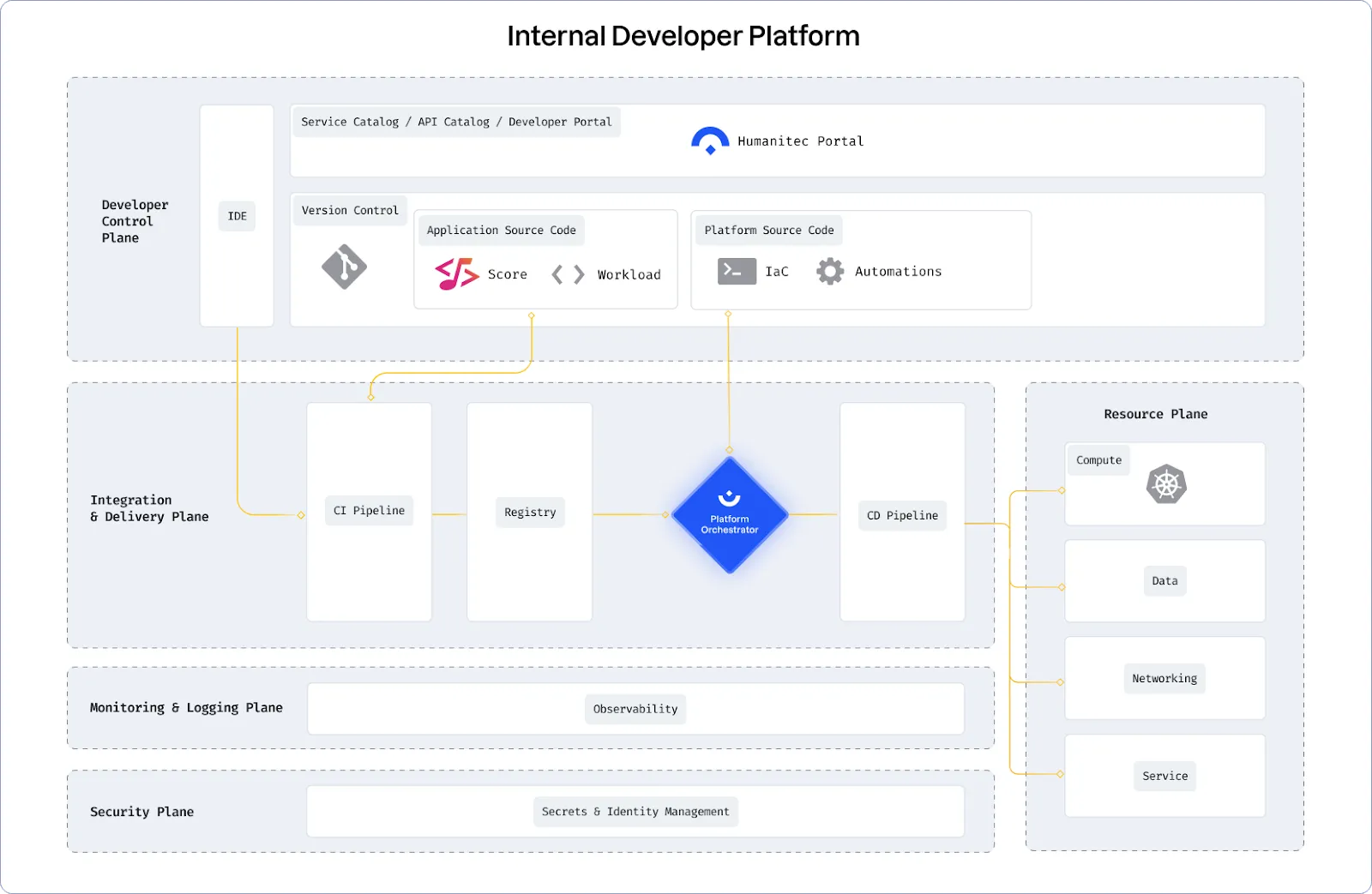

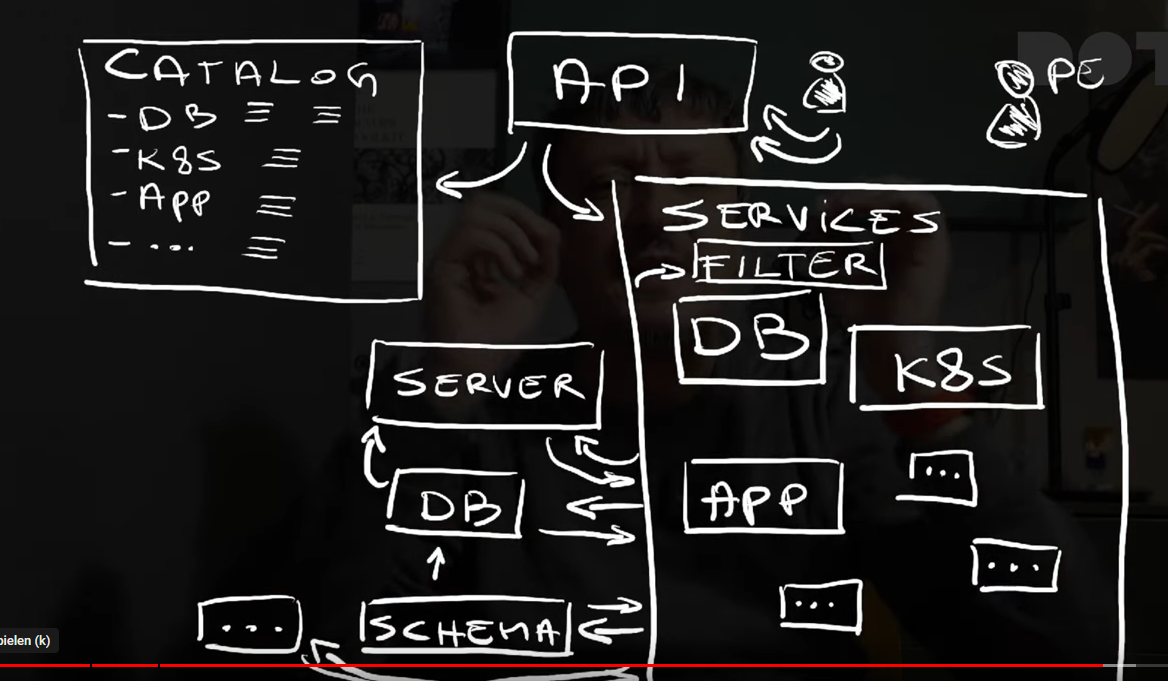

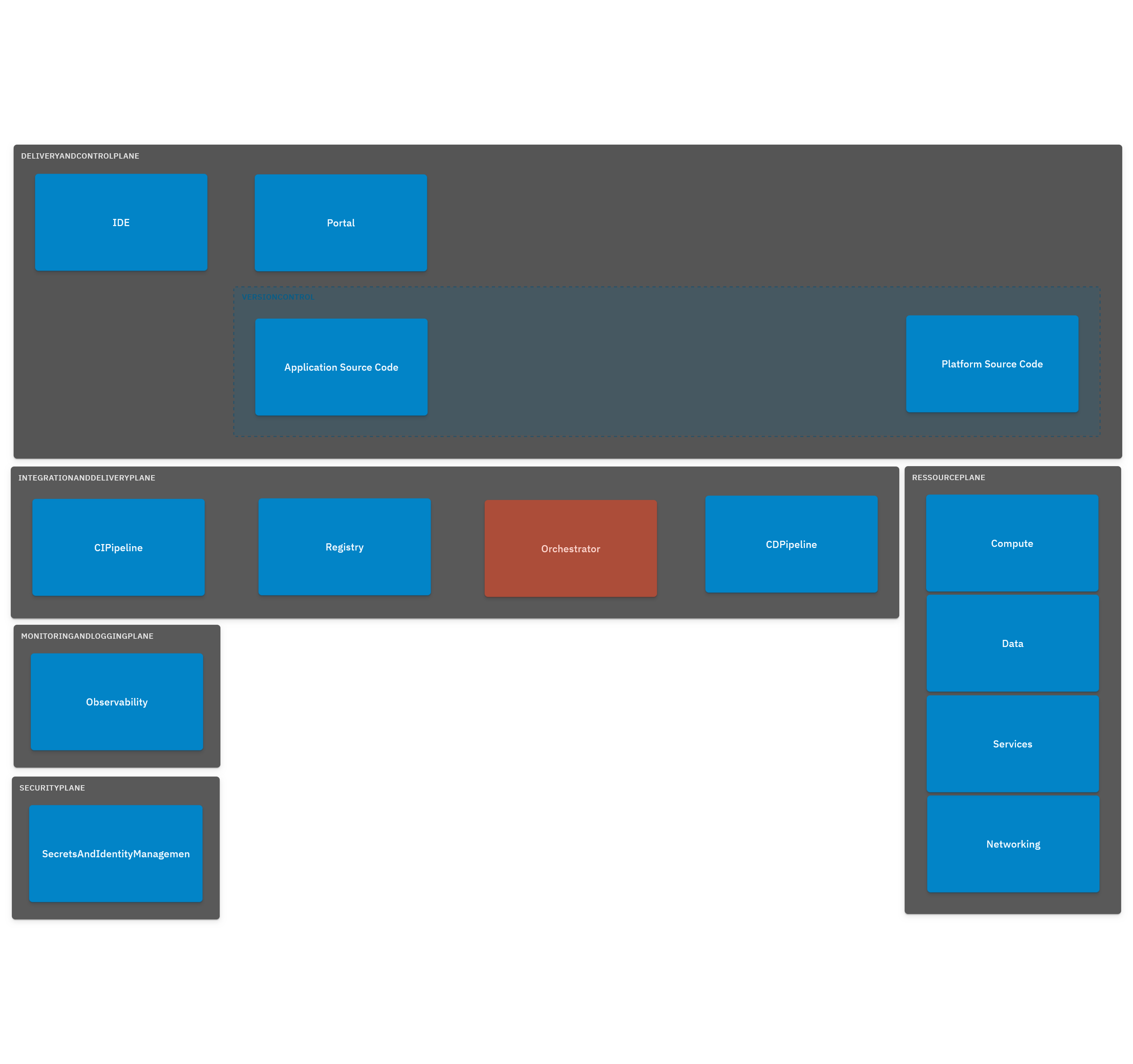

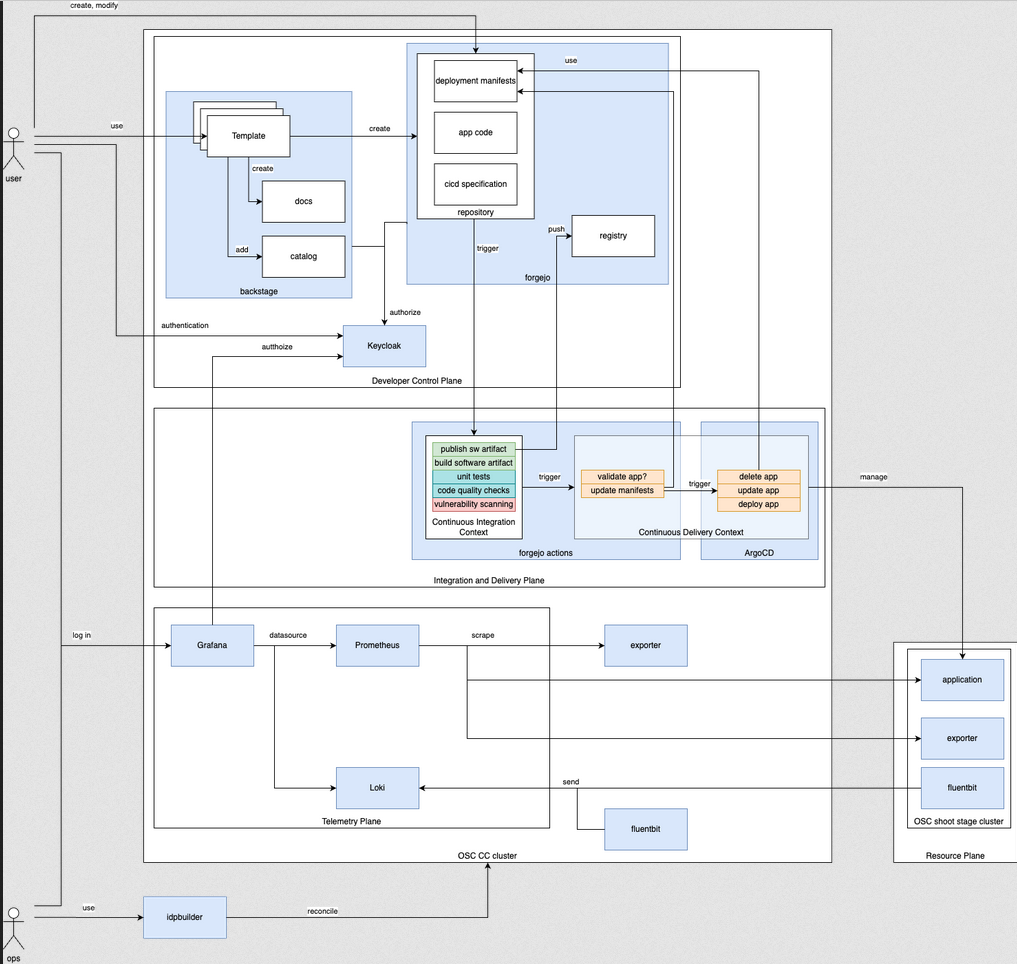

In a platform reference architecture there are five main planes that make up an IDP:

(source: https://humanitec.com/blog/wtf-internal-developer-platform-vs-internal-developer-portal-vs-paas)

https://github.com/humanitec-architecture

https://humanitec.com/reference-architectures

This page is a work in progress. Right now the index contains a collection of links describing and listing typical components and building blocks of platforms. We also have a growing number of subsections on special types of components.

See also:

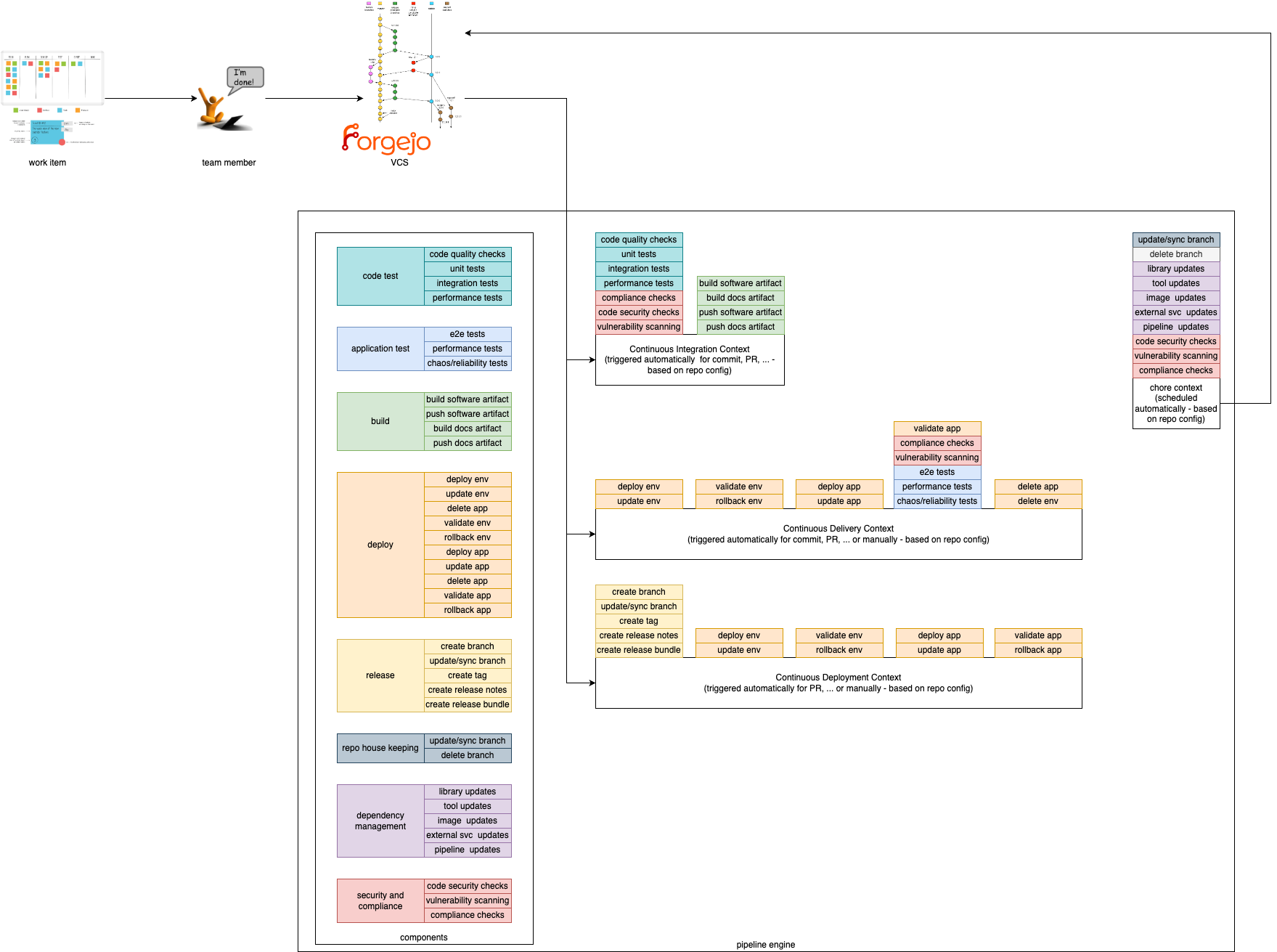

This document describes the concept of pipelining in the context of the Edge Developer Framework.

In order to provide a composable pipeline as part of the Edge Developer Framework (EDF), we have defined a set of concepts that can be used to create pipelines for different usage scenarios. These concepts are:

Pipeline Contexts define the context in which a pipeline execution is run. Typically, a context corresponds to a specific step within the software development lifecycle, such as building and testing code, deploying and testing code in staging environments, or releasing code. Contexts define which components are used, in which order, and the environment in which they are executed.

Components are the building blocks, which are used in the pipeline. They define specific steps that are executed in a pipeline such as compiling code, running tests, or deploying an application.

We provide four Pipeline Contexts that can be used to create pipelines for different usage scenarios. The contexts can be described as the golden path, which is fully configurable and extensible by the users.

Pipeline runs with a given context can be triggered by different actions. For example, a pipeline run with the Continuous Integration context can be triggered by a commit to a repository, while a pipeline run with the Continuous Delivery context could be triggered by merging a pull request to a specific branch.

The Continuous Integration context is focused on running tests and checks on every commit to a repository. It is used to ensure that the codebase is always in a working state and that new changes do not break existing functionality. Tests within this context are typically fast and lightweight, and are used to catch simple errors such as syntax errors, typos, and basic logic errors. Static vulnerability and compliance checks can also be performed in this context.

The Continuous Delivery context is focused on deploying code to an (ephemeral) staging environment after its static checks have been performed. It is used to ensure that the codebase is always deployable and that new changes can be easily reviewed by stakeholders. Tests within this context are typically more comprehensive than those in the Continuous Integration context, and handle more complex scenarios such as integration tests and end-to-end tests. Additionally, live security and compliance checks can be performed in this context.

The Continuous Deployment context is focused on deploying code to a production environment and/or publishing artefacts after static checks have been performed.

This context focuses on measures that need to be carried out regularly (e.g. security or compliance scans). They are used to ensure the robustness, security and efficiency of software projects. They enable teams to maintain high standards of quality and reliability while minimizing risks and allowing developers to focus on more critical and creative aspects of development, increasing overall productivity and satisfaction.
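The relationship between triggers and contexts can be sketched as a simple mapping. This is only an illustration under stated assumptions: the names Continuous Integration, Continuous Delivery and Continuous Deployment appear in this document, whereas the label `scheduled` for the context with recurring measures, the `Trigger` shapes, and the `release-tag` trigger are hypothetical.

```typescript
// The first three names appear in the text; "scheduled" is a hypothetical
// label for the context that runs recurring measures.
type PipelineContext =
  | "continuous-integration"
  | "continuous-delivery"
  | "continuous-deployment"
  | "scheduled";

// Hypothetical trigger events. The text only names commits and merged
// pull requests; the other two are assumptions for illustration.
type Trigger =
  | { kind: "commit"; branch: string }
  | { kind: "pull-request-merged"; targetBranch: string }
  | { kind: "release-tag"; tag: string }
  | { kind: "schedule"; cron: string };

// Selects the context in which a pipeline run is executed for a trigger.
function contextFor(trigger: Trigger): PipelineContext {
  switch (trigger.kind) {
    case "commit":
      return "continuous-integration";
    case "pull-request-merged":
      return "continuous-delivery";
    case "release-tag":
      return "continuous-deployment";
    case "schedule":
      return "scheduled";
  }
}

const onCommit = contextFor({ kind: "commit", branch: "feature/login" });
const onMerge = contextFor({ kind: "pull-request-merged", targetBranch: "main" });
```

Modelling triggers as a discriminated union keeps the mapping exhaustive: adding a new trigger kind without handling it in `contextFor` becomes a compile-time error.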

Components are the composable and self-contained building blocks for the contexts described above. The aim is to cover most (common) use cases for application teams and make them particularly easy to use by following our golden paths. This way, application teams only have to include and configure the functionalities they actually need. An additional benefit is that this allows for easy extensibility. If a desired functionality has not been implemented as a component, application teams can simply add their own.

Components must be as small as possible and follow the same concepts of software development and deployment as any other software product. In particular, they must have the following characteristics:

In the EDF components are divided into different categories. Each category contains components that perform similar actions. For example, the build category contains components that compile code, while the deploy category contains components that automate the management of the artefacts created in a production-like system.

Note: Components are comparable to interfaces in programming. Each component defines a certain behaviour, but the actual implementation of these actions depends on the specific codebase and environment.

For example, the build component defines the action of compiling code, but the actual build process depends on the programming language and build tools used in the project. The vulnerability scanning component will likely execute different tools and interact with different APIs depending on the context in which it is executed.
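The interface analogy can be made concrete with a small sketch. All names here (`BuildComponent`, `BuildStep`, `planBuild`) are hypothetical and not part of the EDF. The sketch only shows one behaviour contract with two language-specific implementations.

```typescript
// What a build component produces: a command and where to run it.
interface BuildStep {
  cwd: string;
  argv: string[];
}

// Hypothetical contract shared by all components of the build category.
interface BuildComponent {
  name: string;
  plan(projectDir: string): BuildStep;
}

// Two implementations of the same behaviour for different toolchains.
const goBuild: BuildComponent = {
  name: "go",
  plan: projectDir => ({ cwd: projectDir, argv: ["go", "build", "./..."] }),
};

const nodeBuild: BuildComponent = {
  name: "node",
  plan: projectDir => ({ cwd: projectDir, argv: ["npm", "run", "build"] }),
};

// The pipeline only depends on the contract, not on the toolchain.
function planBuild(component: BuildComponent, projectDir: string): string {
  const step = component.plan(projectDir);
  return `(cd ${step.cwd} && ${step.argv.join(" ")})`;
}

const goPlan = planBuild(goBuild, "services/api");
const nodePlan = planBuild(nodeBuild, "services/web");
```

A pipeline written against `BuildComponent` does not change when a team swaps in its own implementation, which is the extensibility property described above.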

Build components are used to compile code. They can be used to compile code written in different programming languages, and can be used to compile code for different platforms.

Code test components define tests that are run on the codebase. They are used to ensure that the codebase is always in a working state and that new changes do not break existing functionality. Tests within this category are typically fast and lightweight, and are used to catch simple errors such as syntax errors, typos, and basic logic errors. Tests must be executable in isolation, and must not require external dependencies such as databases or network connections.

Application tests run the code in a real execution environment and provide external dependencies. These tests are typically more comprehensive than those in the Code Test category, and handle more complex scenarios such as integration tests and end-to-end tests.

Deploy components are used to deploy code to different environments, but can also be used to publish artifacts. They are typically used in the Continuous Delivery and Continuous Deployment contexts.

Release components are used to create releases of the codebase. They can be used to create tags in the repository, create release notes, or perform other tasks related to releasing code. They are typically used in the Continuous Deployment context.

Repo house keeping components are used to manage the repository. They can be used to clean up old branches, update the repository’s README file, or perform other maintenance tasks. They can also be used to handle issues, such as automatically closing stale issues.

Dependency management is used to automate the process of managing dependencies in a codebase. It can be used to create pull requests with updated dependencies, or to automatically update dependencies in a codebase.

Security and compliance components are used to ensure that the codebase meets security and compliance requirements. They can be used to scan the codebase for vulnerabilities, check for compliance with coding standards, or perform other security and compliance checks. Depending on the context, different tools can be used to accomplish scanning. In the Continuous Integration context, static code analysis can be used to scan the codebase for vulnerabilities, while in the Continuous Delivery context, live security and compliance checks can be performed.

We have GitOps these days, so there is a desired state of an environment in a repo and a reconciling mechanism, run by GitOps, that enforces this state on the environment.

There is no continuous-whatever step in between: GitOps is just ‘overwriting’ (to avoid saying ‘delivering’ or ‘deploying’) the environment with the new state.

This means that whatever quality-assuring steps have to take place before ‘overwriting’ have to be defined as state changers in the repos, not in the environments.
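The reconciling mechanism described here can be sketched as a pure function that compares desired and actual state. This is a simplified illustration, not how any particular GitOps tool is implemented. The `State` and `Action` types and the version strings are assumptions.

```typescript
// Resource name -> manifest revision (for example a content hash).
type State = Record<string, string>;

type Action =
  | { op: "apply"; name: string; spec: string }
  | { op: "delete"; name: string };

// Computes the actions needed to make the environment match the repo.
// The environment is simply overwritten with the desired state.
function reconcile(desired: State, actual: State): Action[] {
  const actions: Action[] = [];
  // Apply everything that is missing or differs from the desired state.
  for (const [name, spec] of Object.entries(desired)) {
    if (actual[name] !== spec) {
      actions.push({ op: "apply", name, spec });
    }
  }
  // Remove everything that is no longer declared in the repo.
  for (const name of Object.keys(actual)) {
    if (!(name in desired)) {
      actions.push({ op: "delete", name });
    }
  }
  return actions;
}

const repoState: State = { web: "v2", db: "v1" };
const clusterState: State = { web: "v1", cache: "v1" };
const plan = reconcile(repoState, clusterState);
```

Note that `reconcile` has no notion of tests or approvals: any quality gate has to act on `repoState` before it is committed, which is the point made above.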

Conclusion: I think we only have three contexts, or let’s say we don’t have the context ‘continuous delivery’.

This page is a work in progress. Right now the index contains a collection of links describing developer portals.

https://www.getport.io/compare/backstage-vs-port

‘Platform Orchestration’ was first mentioned by Thoughtworks in Sept 2023.

Here are capability domains to consider when building platforms for cloud-native computing:

An Internal Developer Platform (IDP) should be built to cover 5 Core Components:

| Core Component | Short Description |
|---|---|
| Application Configuration Management | Manage application configuration in a dynamic, scalable and reliable way. |
| Infrastructure Orchestration | Orchestrate your infrastructure in a dynamic and intelligent way depending on the context. |
| Environment Management | Enable developers to create new and fully provisioned environments whenever needed. |
| Deployment Management | Implement a delivery pipeline for Continuous Delivery or even Continuous Deployment (CD). |
| Role-Based Access Control | Manage who can do what in a scalable way. |

The goal for the CNOE framework is to bring together a cohort of enterprises operating at the same scale so that they can navigate their operational technology decisions together, de-risk their tooling bets, coordinate contribution, and offer guidance to large enterprises on which CNCF technologies to use together to achieve the best cloud efficiencies.

See https://cnoe.io/docs/reference-implementation/integrations/reference-impl:

# in a local terminal with docker and kind

idpbuilder create --use-path-routing --log-level debug --package-dir https://github.com/cnoe-io/stacks//ref-implementation

time=2024-08-05T14:48:33.348+02:00 level=INFO msg="Creating kind cluster" logger=setup

time=2024-08-05T14:48:33.371+02:00 level=INFO msg="Runtime detected" logger=setup provider=docker

########################### Our kind config ############################

# Kind kubernetes release images https://github.com/kubernetes-sigs/kind/releases

kind: Cluster

apiVersion: kind.x-k8s.io/v1alpha4

nodes:

- role: control-plane

image: "kindest/node:v1.29.2"

kubeadmConfigPatches:

- |

kind: InitConfiguration

nodeRegistration:

kubeletExtraArgs:

node-labels: "ingress-ready=true"

extraPortMappings:

- containerPort: 443

hostPort: 8443

protocol: TCP

containerdConfigPatches:

- |-

[plugins."io.containerd.grpc.v1.cri".registry.mirrors."gitea.cnoe.localtest.me:8443"]

endpoint = ["https://gitea.cnoe.localtest.me"]

[plugins."io.containerd.grpc.v1.cri".registry.configs."gitea.cnoe.localtest.me".tls]

insecure_skip_verify = true

######################### config end ############################

time=2024-08-05T14:48:33.394+02:00 level=INFO msg="Creating kind cluster" logger=setup cluster=localdev

time=2024-08-05T14:48:53.680+02:00 level=INFO msg="Done creating cluster" logger=setup cluster=localdev

time=2024-08-05T14:48:53.905+02:00 level=DEBUG+3 msg="Getting Kube config" logger=setup

time=2024-08-05T14:48:53.908+02:00 level=DEBUG+3 msg="Getting Kube client" logger=setup

time=2024-08-05T14:48:53.908+02:00 level=INFO msg="Adding CRDs to the cluster" logger=setup

time=2024-08-05T14:48:53.948+02:00 level=DEBUG+3 msg="crd not yet established, waiting." "crd name"=custompackages.idpbuilder.cnoe.io

time=2024-08-05T14:48:53.954+02:00 level=DEBUG+3 msg="crd not yet established, waiting." "crd name"=custompackages.idpbuilder.cnoe.io

time=2024-08-05T14:48:53.957+02:00 level=DEBUG+3 msg="crd not yet established, waiting." "crd name"=custompackages.idpbuilder.cnoe.io

time=2024-08-05T14:48:53.981+02:00 level=DEBUG+3 msg="crd not yet established, waiting." "crd name"=gitrepositories.idpbuilder.cnoe.io

time=2024-08-05T14:48:53.985+02:00 level=DEBUG+3 msg="crd not yet established, waiting." "crd name"=gitrepositories.idpbuilder.cnoe.io

time=2024-08-05T14:48:54.734+02:00 level=DEBUG+3 msg="Creating controller manager" logger=setup

time=2024-08-05T14:48:54.737+02:00 level=DEBUG+3 msg="Created temp directory for cloning repositories" logger=setup dir=/tmp/idpbuilder-localdev-2865684949

time=2024-08-05T14:48:54.737+02:00 level=INFO msg="Setting up CoreDNS" logger=setup

time=2024-08-05T14:48:54.798+02:00 level=INFO msg="Setting up TLS certificate" logger=setup

time=2024-08-05T14:48:54.811+02:00 level=DEBUG+3 msg="Creating/getting certificate" logger=setup host=cnoe.localtest.me sans="[cnoe.localtest.me *.cnoe.localtest.me]"

time=2024-08-05T14:48:54.825+02:00 level=DEBUG+3 msg="Creating secret for certificate" logger=setup host=cnoe.localtest.me

time=2024-08-05T14:48:54.832+02:00 level=DEBUG+3 msg="Running controllers" logger=setup

time=2024-08-05T14:48:54.833+02:00 level=DEBUG+3 msg="starting manager"

time=2024-08-05T14:48:54.833+02:00 level=INFO msg="Creating localbuild resource" logger=setup

time=2024-08-05T14:48:54.834+02:00 level=INFO msg="Starting EventSource" controller=custompackage controllerGroup=idpbuilder.cnoe.io controllerKind=CustomPackage source="kind source: *v1alpha1.CustomPackage"

time=2024-08-05T14:48:54.834+02:00 level=INFO msg="Starting EventSource" controller=gitrepository controllerGroup=idpbuilder.cnoe.io controllerKind=GitRepository source="kind source: *v1alpha1.GitRepository"

time=2024-08-05T14:48:54.834+02:00 level=INFO msg="Starting Controller" controller=custompackage controllerGroup=idpbuilder.cnoe.io controllerKind=CustomPackage

time=2024-08-05T14:48:54.834+02:00 level=INFO msg="Starting Controller" controller=gitrepository controllerGroup=idpbuilder.cnoe.io controllerKind=GitRepository

time=2024-08-05T14:48:54.834+02:00 level=INFO msg="Starting EventSource" controller=localbuild controllerGroup=idpbuilder.cnoe.io controllerKind=Localbuild source="kind source: *v1alpha1.Localbuild"

time=2024-08-05T14:48:54.834+02:00 level=INFO msg="Starting Controller" controller=localbuild controllerGroup=idpbuilder.cnoe.io controllerKind=Localbuild

time=2024-08-05T14:48:54.937+02:00 level=INFO msg="Starting workers" controller=gitrepository controllerGroup=idpbuilder.cnoe.io controllerKind=GitRepository "worker count"=1

time=2024-08-05T14:48:54.937+02:00 level=INFO msg="Starting workers" controller=custompackage controllerGroup=idpbuilder.cnoe.io controllerKind=CustomPackage "worker count"=1

time=2024-08-05T14:48:54.937+02:00 level=INFO msg="Starting workers" controller=localbuild controllerGroup=idpbuilder.cnoe.io controllerKind=Localbuild "worker count"=1

time=2024-08-05T14:48:56.863+02:00 level=DEBUG+3 msg=Reconciling controller=localbuild controllerGroup=idpbuilder.cnoe.io controllerKind=Localbuild Localbuild.name=localdev namespace="" name=localdev reconcileID=cc0e5b9d-4952-4fd1-9d62-6d9821f180be resource=/localdev

time=2024-08-05T14:48:56.863+02:00 level=DEBUG+3 msg="Create or update namespace" controller=localbuild controllerGroup=idpbuilder.cnoe.io controllerKind=Localbuild Localbuild.name=localdev namespace="" name=localdev reconcileID=cc0e5b9d-4952-4fd1-9d62-6d9821f180be resource="&Namespace{ObjectMeta:{idpbuilder-localdev 0 0001-01-01 00:00:00 +0000 UTC <nil> <nil> map[] map[] [] [] []},Spec:NamespaceSpec{Finalizers:[],},Status:NamespaceStatus{Phase:,Conditions:[]NamespaceCondition{},},}"

time=2024-08-05T14:48:56.983+02:00 level=DEBUG+3 msg="installing core packages" controller=localbuild controllerGroup=idpbuilder.cnoe.io controllerKind=Localbuild Localbuild.name=localdev namespace="" name=localdev reconcileID=cc0e5b9d-4952-4fd1-9d62-6d9821f180be

time=2024-08-05T14:

...

time=2024-08-05T14:51:04.166+02:00 level=INFO msg="Stopping and waiting for webhooks"

time=2024-08-05T14:51:04.166+02:00 level=INFO msg="Stopping and waiting for HTTP servers"

time=2024-08-05T14:51:04.166+02:00 level=INFO msg="Wait completed, proceeding to shutdown the manager"

########################### Finished Creating IDP Successfully! ############################

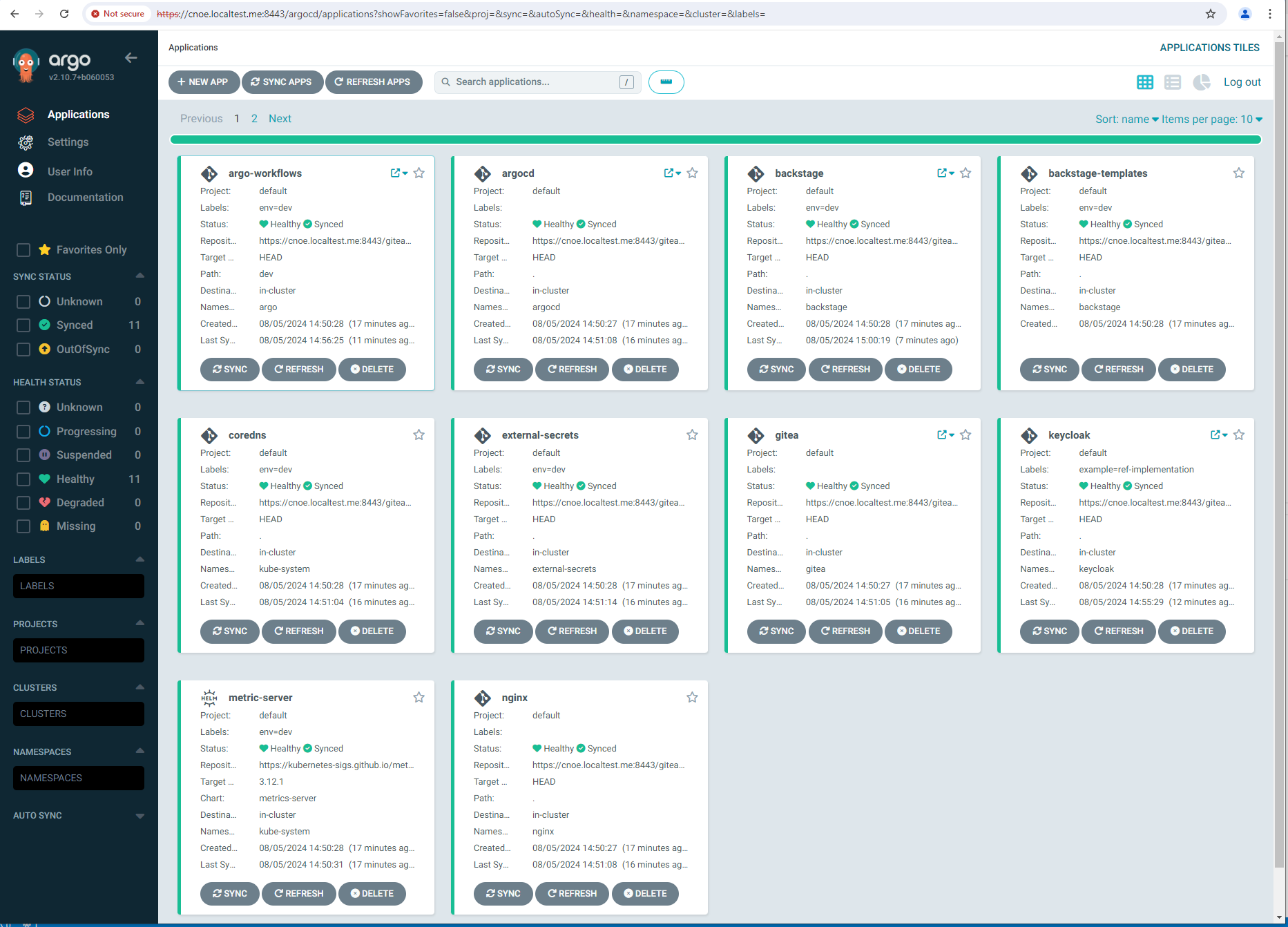

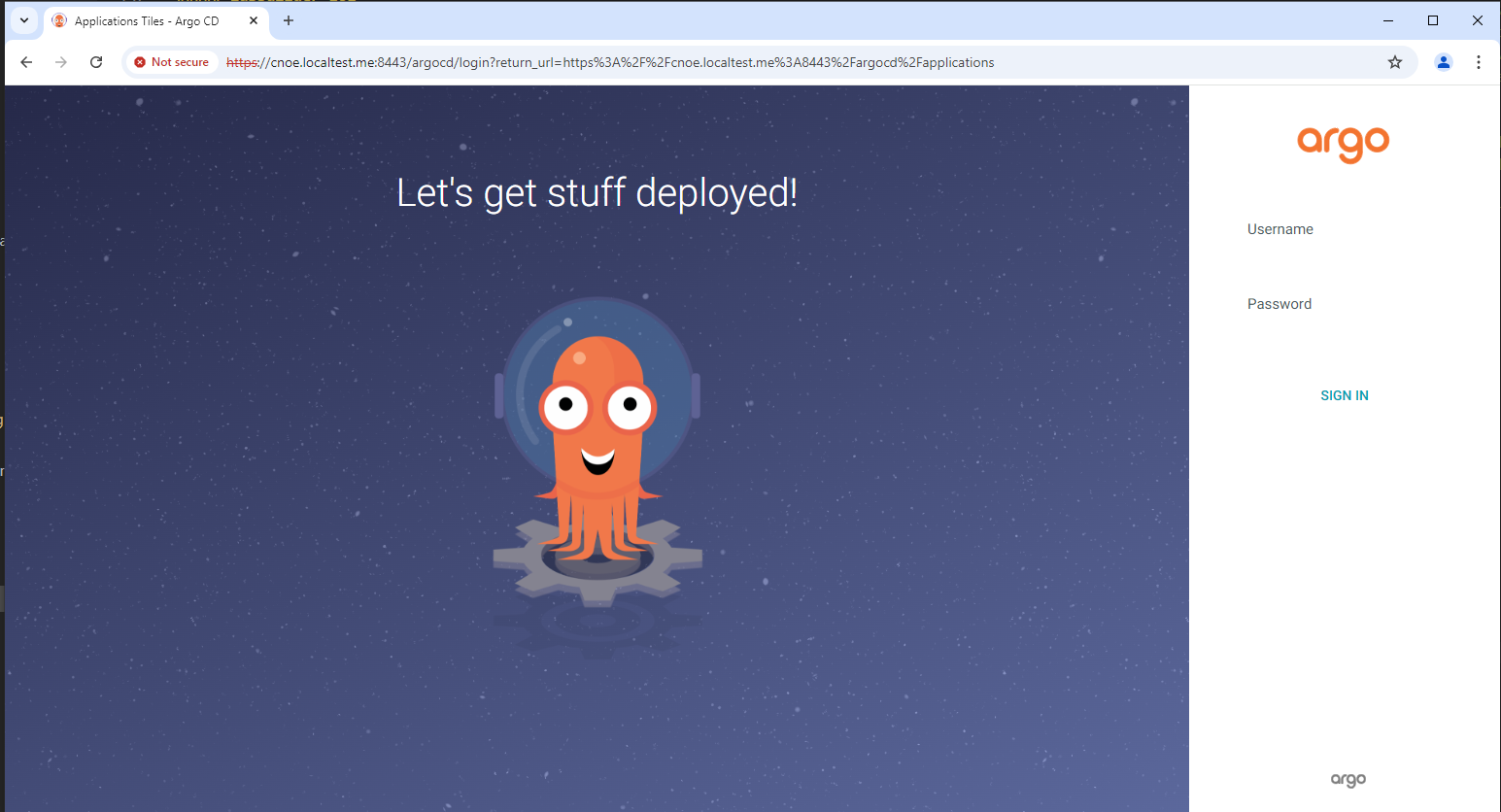

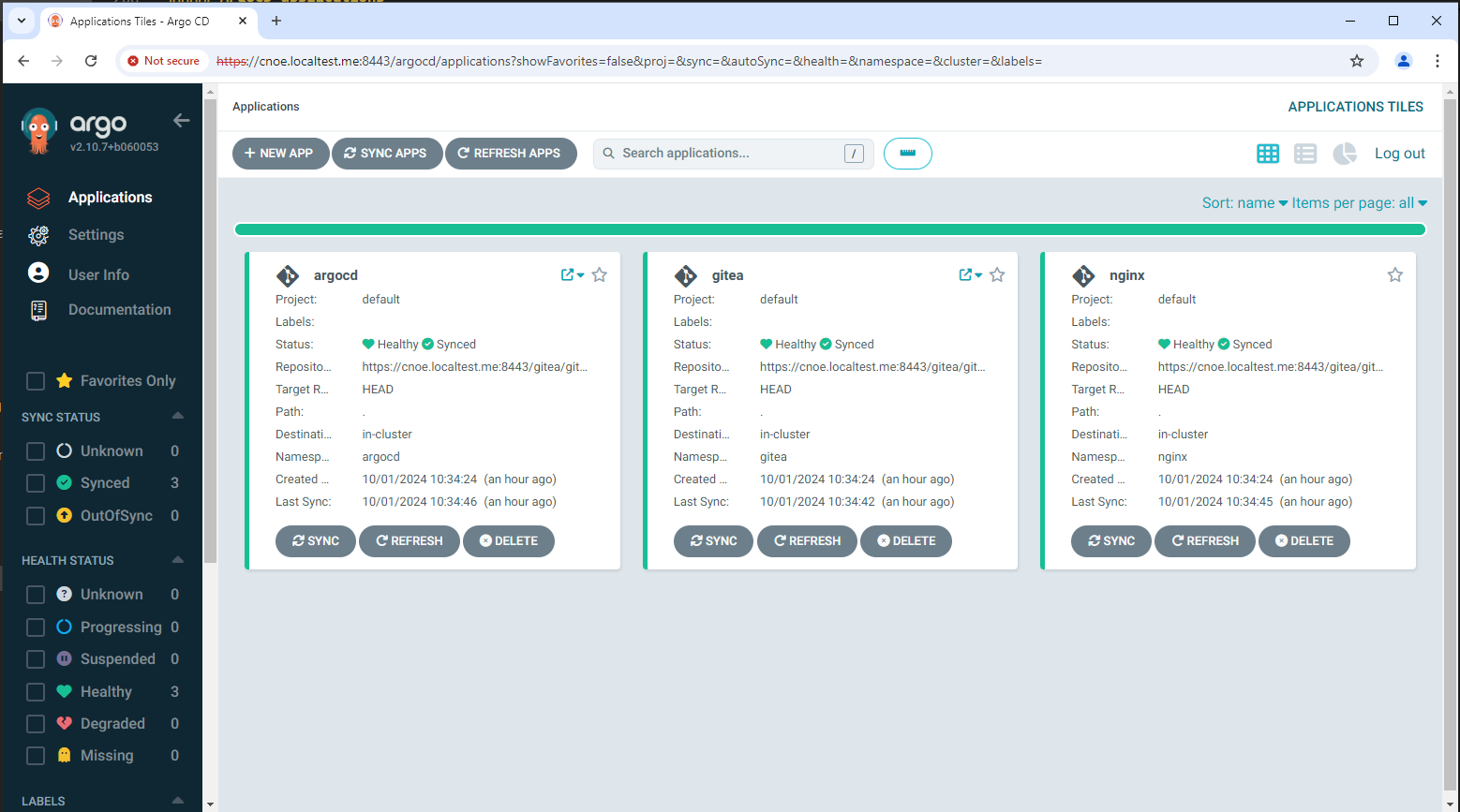

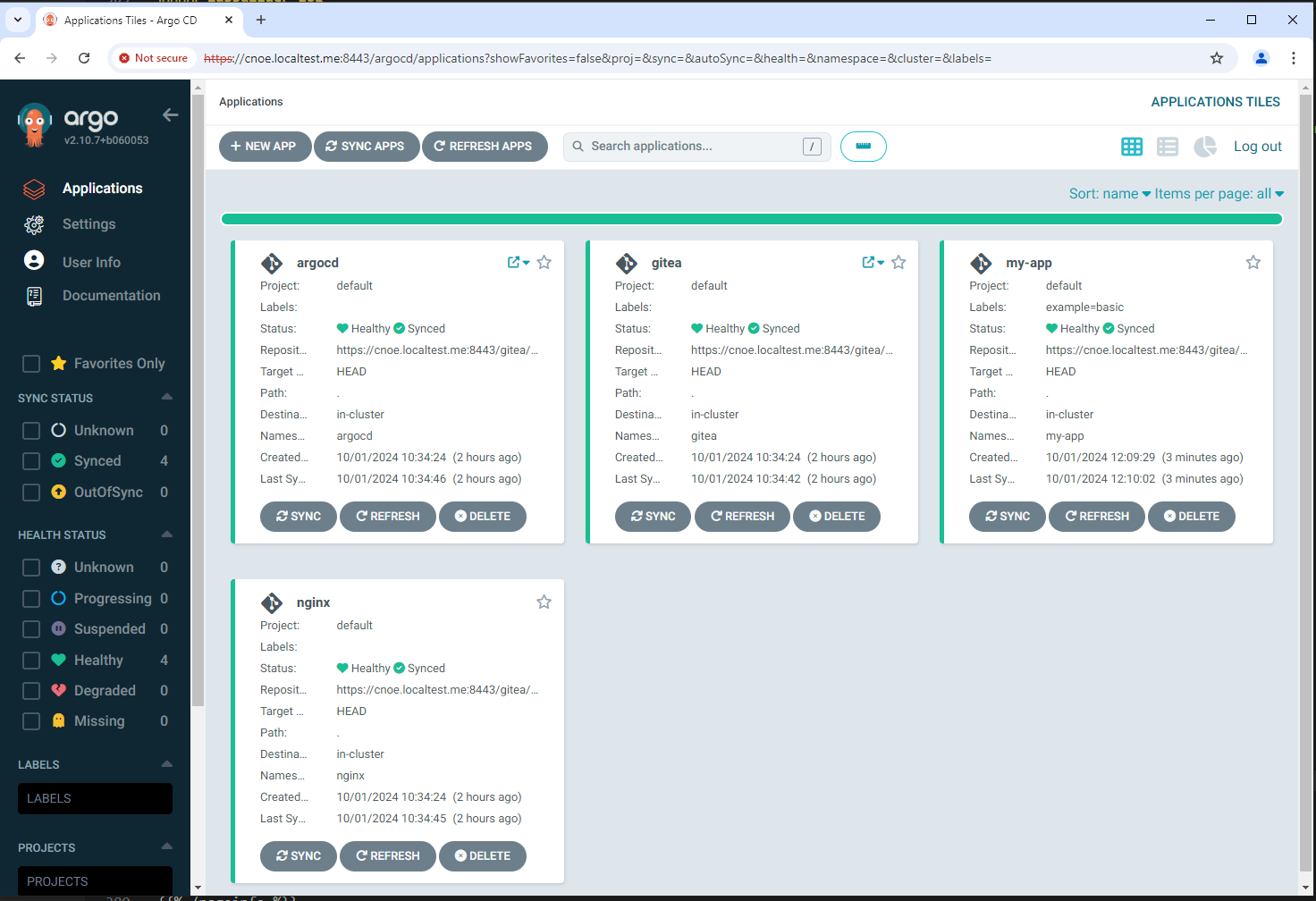

Can Access ArgoCD at https://cnoe.localtest.me:8443/argocd

Username: admin

Password can be retrieved by running: idpbuilder get secrets -p argocd

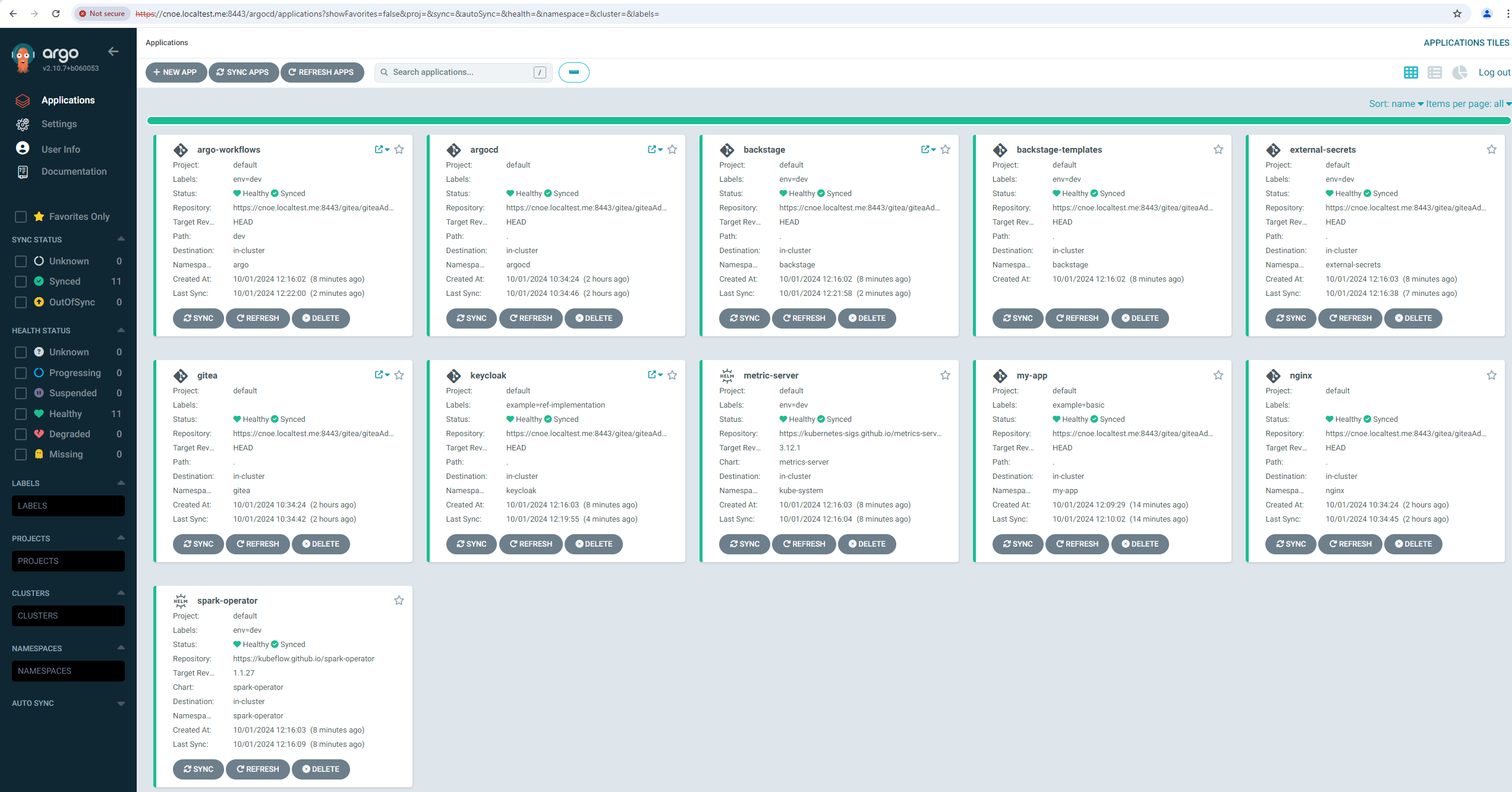

After about 10 minutes all applications are rolled out (Backstage takes the longest):

stl@ubuntu-vpn:~$ kubectl get applications -A

NAMESPACE NAME SYNC STATUS HEALTH STATUS

argocd argo-workflows Synced Healthy

argocd argocd Synced Healthy

argocd backstage Synced Healthy

argocd included-backstage-templates Synced Healthy

argocd coredns Synced Healthy

argocd external-secrets Synced Healthy

argocd gitea Synced Healthy

argocd keycloak Synced Healthy

argocd metric-server Synced Healthy

argocd nginx Synced Healthy

argocd spark-operator Synced Healthy

stl@ubuntu-vpn:~$ idpbuilder get secrets

---------------------------

Name: argocd-initial-admin-secret

Namespace: argocd

Data:

password : sPMdWiy0y0jhhveW

username : admin

---------------------------

Name: gitea-credential

Namespace: gitea

Data:

password : |iJ+8gG,(Jj?cc*G>%(i'OA7@(9ya3xTNLB{9k'G

username : giteaAdmin

---------------------------

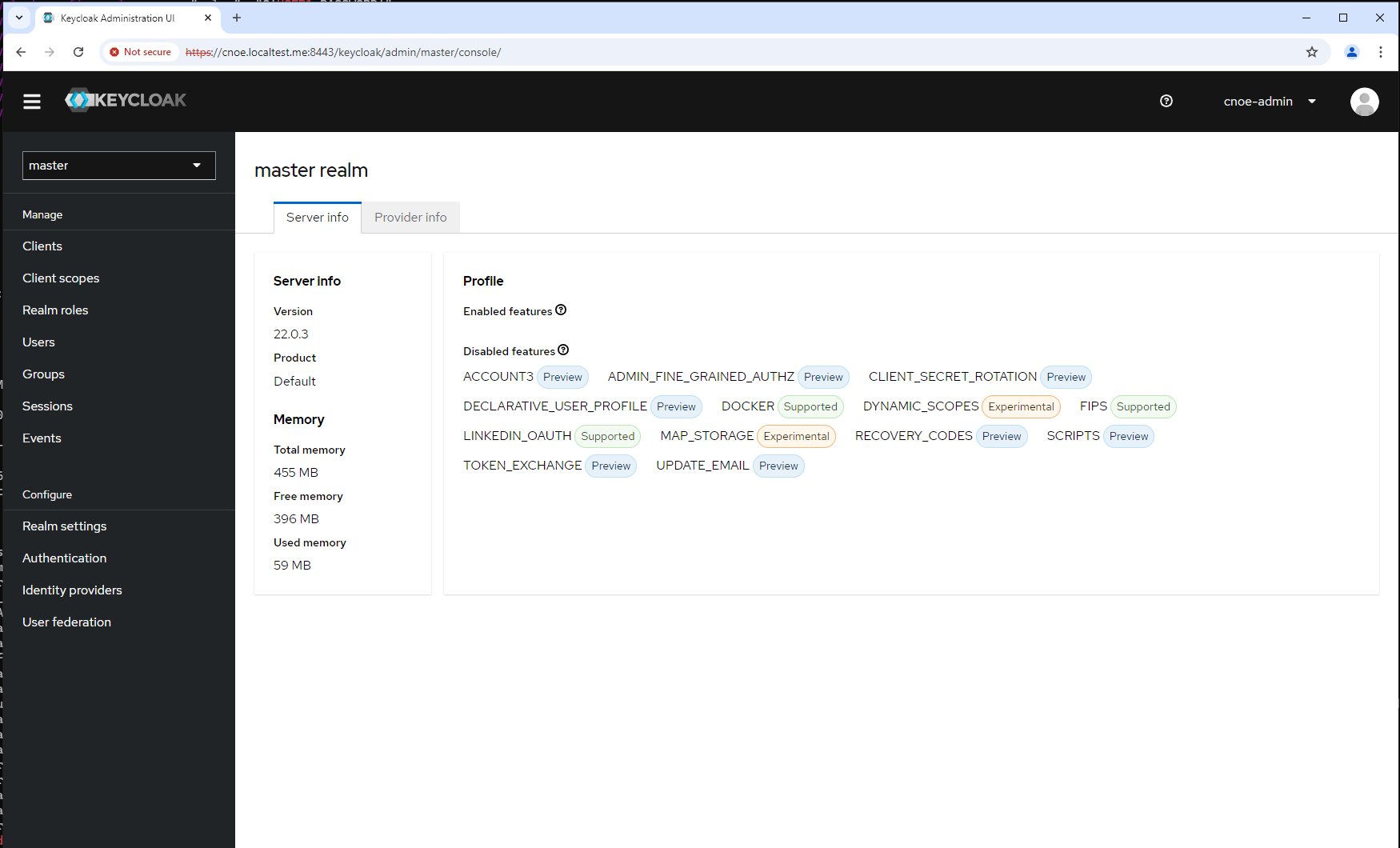

Name: keycloak-config

Namespace: keycloak

Data:

KC_DB_PASSWORD : ES-rOE6MXs09r+fAdXJOvaZJ5I-+nZ+hj7zF

KC_DB_USERNAME : keycloak

KEYCLOAK_ADMIN_PASSWORD : BBeMUUK1CdmhKWxZxDDa1c5A+/Z-dE/7UD4/

POSTGRES_DB : keycloak

POSTGRES_PASSWORD : ES-rOE6MXs09r+fAdXJOvaZJ5I-+nZ+hj7zF

POSTGRES_USER : keycloak

USER_PASSWORD : RwCHPvPVMu+fQM4L6W/q-Wq79MMP+3CN-Jeo

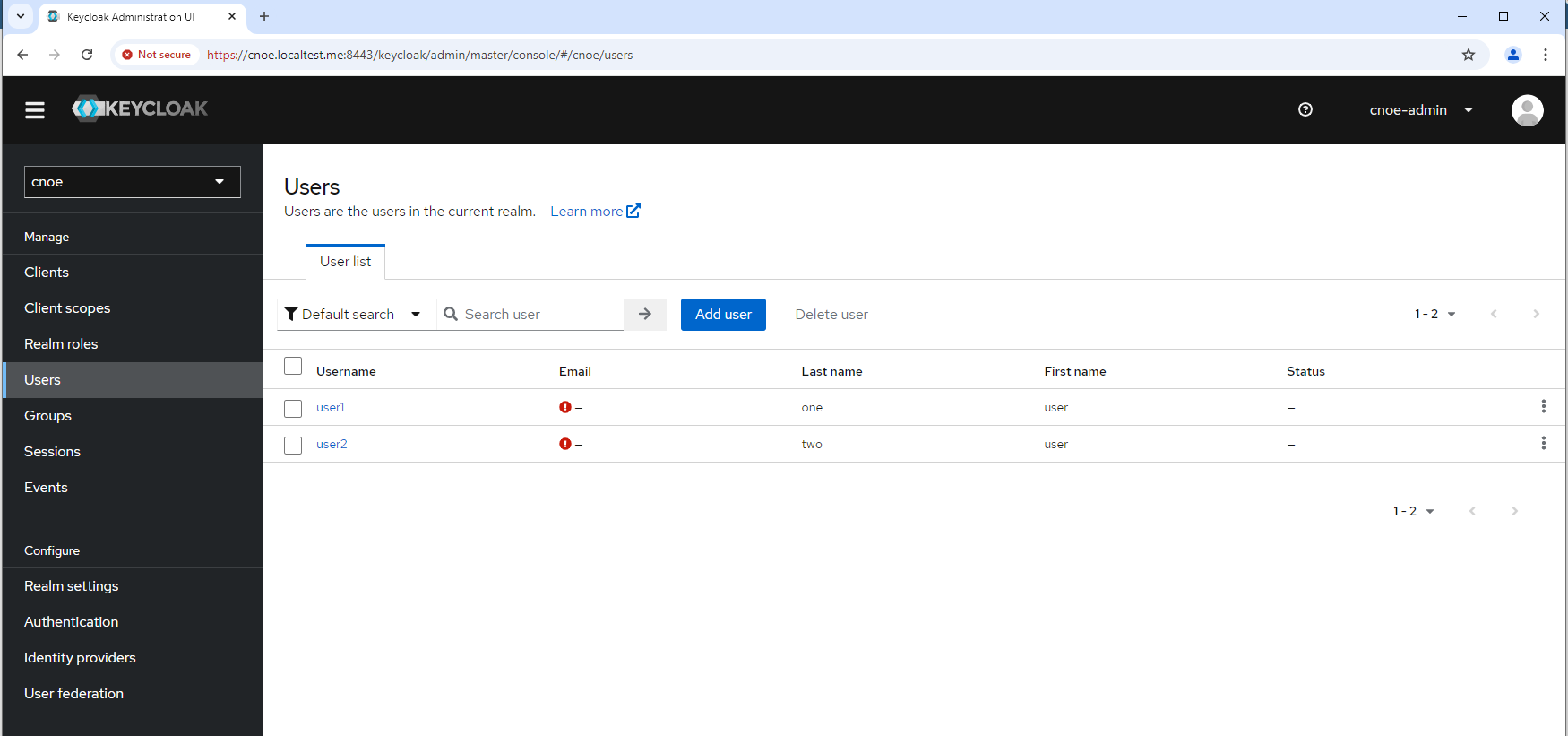

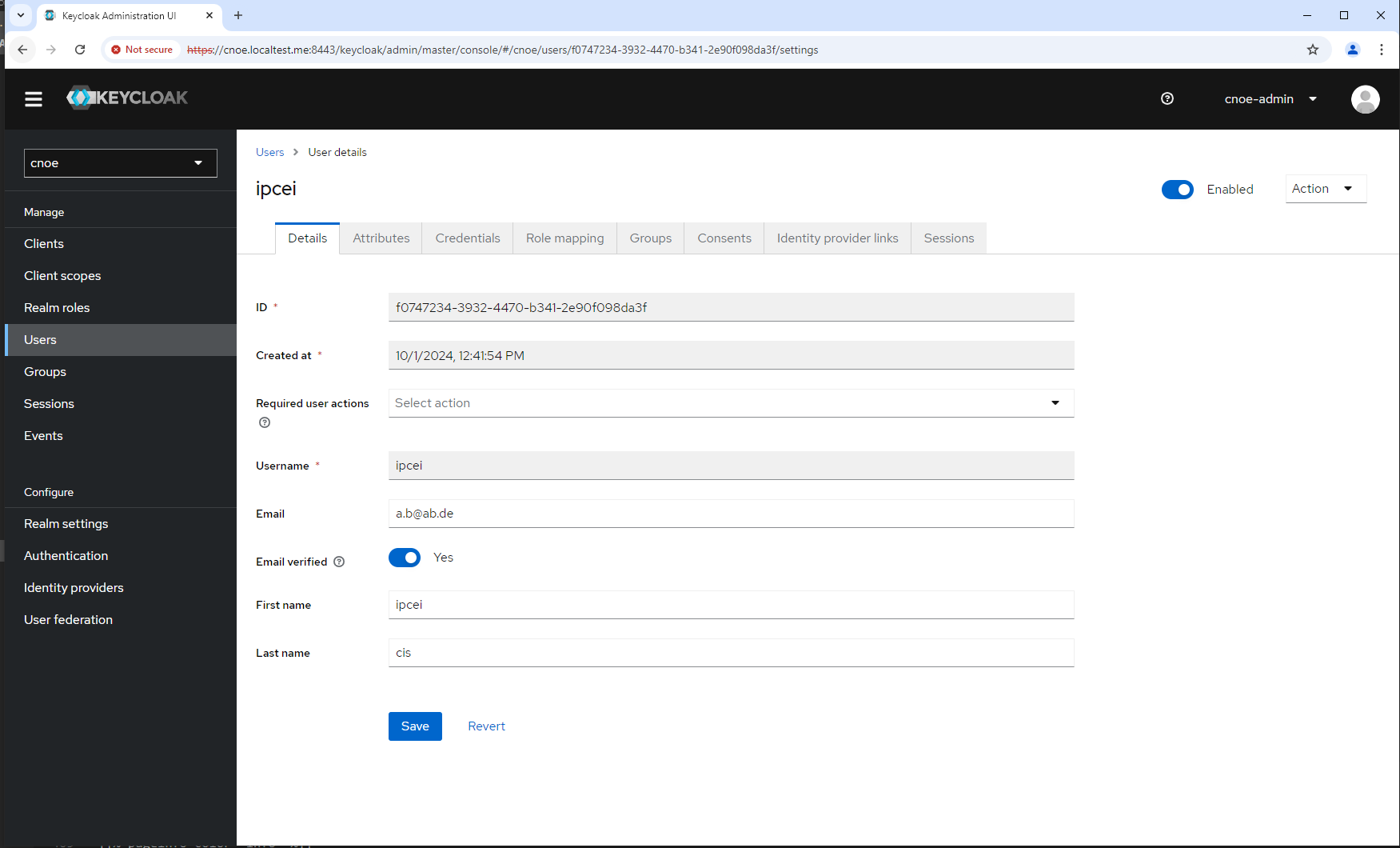

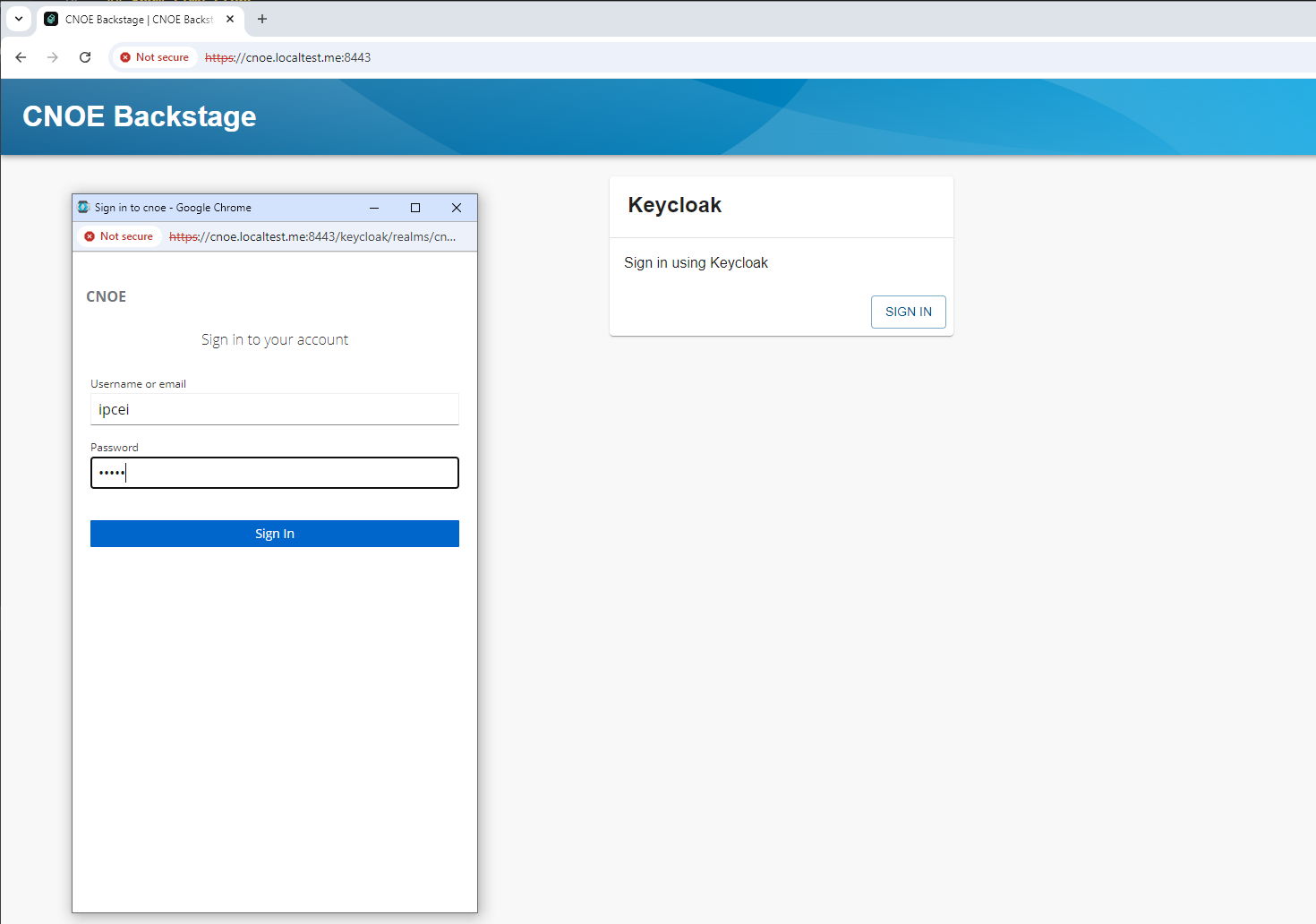

Login works with the credentials shown above:

tbd

This design documentation structure is inspired by the design of crossplane.

EDF is running as a controlplane - or let’s say an orchestration plane, correct wording is still to be defined - in a kubernetes cluster. Right now we have at least ArgoCD as controller of manifests which we provide as CNOE stacks of packages and standalone packages.

The implementation of EDF must be kubernetes provider agnostic. Thus each provider specific deployment dependency must be factored out into provider specific definitions or deployment procedures.

This implies that EDF must always be deployable into a local cluster, whereby by ’local’ we mean a cluster which is under the full control of the platform engineer, e.g. a kind cluster on their laptop.

When booting and reconciling, the orchestrator that executes the ‘final’ stack (here: ArgoCD) needs rendered (or hydrated) representations of the manifests.

It is either not possible or not desired for the orchestrator itself to resolve dependencies or configuration values.

The hydration takes place for all target clouds/kubernetes providers. There is no ‘default’ or ‘special’ setup, like the Kind version.

This implies that in a development process there needs to be a build step hydrating the ArgoCD manifests for the targeted cloud.
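Such a hydrating build step can be sketched as follows. This is a minimal illustration under stated assumptions: the `${key}` placeholder syntax, the `hydrate` helper, the target name `exampleCloud` and its values are all hypothetical. Real setups would more likely rely on existing templating tools.

```typescript
// Replaces every ${key} placeholder with its value and fails loudly on
// missing values, so the orchestrator never sees unresolved manifests.
function hydrate(template: string, values: Record<string, string>): string {
  return template.replace(/\$\{(\w+)\}/g, (_match: string, key: string) => {
    const value = values[key];
    if (value === undefined) {
      throw new Error(`no value for placeholder "${key}"`);
    }
    return value;
  });
}

// Hypothetical manifest fragment with provider-specific values factored out.
const ingressTemplate = "host: ${domain}\ningressClassName: ${ingressClass}";

// Every target is hydrated the same way; kind is not a special case.
const targets: Record<string, Record<string, string>> = {
  kind: { domain: "cnoe.localtest.me", ingressClass: "nginx" },
  exampleCloud: { domain: "edf.example.com", ingressClass: "nginx" },
};

const hydrated: Record<string, string> = {};
for (const [name, values] of Object.entries(targets)) {
  hydrated[name] = hydrate(ingressTemplate, values);
}
```

Failing on a missing value at build time follows from the constraint above: the orchestrator is not expected to resolve configuration values itself.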

Discussion from Robert and Stephan-Pierre in the context of stack development - there should be an easy way to have locally changed stacks propagated into the local running platform.

tbd

==== 1 =====

There is a core eDF which is self-contained and does not have any implemented dependency on external platforms. eDF depends on abstractions. Each embedding into customer infrastructure works with adapters which implement the abstraction.

==== 2 =====

eDF has its own IAM. This may either hold the principals and permissions itself when there is no other IAM, or proxy and map them when integrated into external enterprise IAMs.
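Both decisions, the core depending on abstractions and the IAM either holding or proxying principals, can be illustrated together. The sketch below is not an eDF design: `IdentityProvider`, `Principal`, the role name `deployer` and the group mapping are assumptions made for illustration.

```typescript
interface Principal {
  id: string;
  roles: string[];
}

// Abstraction the core depends on (hypothetical name).
interface IdentityProvider {
  lookup(userId: string): Principal | undefined;
}

// Variant 1: the IAM holds principals and permissions itself.
function localIdentityProvider(principals: Principal[]): IdentityProvider {
  const byId = new Map<string, Principal>();
  for (const principal of principals) {
    byId.set(principal.id, principal);
  }
  return { lookup: userId => byId.get(userId) };
}

// Stand-in for an external enterprise IAM: returns group names for a user.
type ExternalDirectory = (userId: string) => string[] | undefined;

// Variant 2: an adapter that proxies the external IAM and maps its groups
// to internal roles.
function proxyIdentityProvider(
  directory: ExternalDirectory,
  roleByGroup: Record<string, string>,
): IdentityProvider {
  return {
    lookup(userId) {
      const groups = directory(userId);
      if (groups === undefined) return undefined;
      const roles: string[] = [];
      for (const group of groups) {
        const role = roleByGroup[group];
        if (role !== undefined) roles.push(role);
      }
      return { id: userId, roles };
    },
  };
}

// Core logic is written against the abstraction only.
function canDeploy(iam: IdentityProvider, userId: string): boolean {
  const principal = iam.lookup(userId);
  return principal !== undefined && principal.roles.includes("deployer");
}

const localIam = localIdentityProvider([{ id: "alice", roles: ["deployer"] }]);
const proxiedIam = proxyIdentityProvider(
  userId => (userId === "bob" ? ["platform-team"] : undefined),
  { "platform-team": "deployer" },
);
```

`canDeploy` behaves the same with either provider, so embedding into a customer IAM only requires a new adapter rather than changes to the core.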

Arch call from 4.12.24, Florian, Stefan, Stephan-Pierre

Participants: Robert, Patrick, Stefan, Stephan 25.2.25, 13-14h

we need charts, because:

(*): marker: “now, for the first time, I have more or less understood what you are actually doing” (**) marker: ????

Sebastiano, Stefan, Robert, Patrick, Stephan 25.2.25, 14-15h

edp standalone ipcei edp

product structure application model (cnoe, oam, score, xrd, …) api backstage (usage scenarios) pipelining ’everything as code’, declarative deployment, crossplane (or rather an orchestrator)

possibly: identity mgmt

not: security monitoring kubernetes internals

pipelining kubernetes-internals api crossplane platforming - creating resources in ‘clouds’ (e.g. gcp and hetzner :-) )

security identity-mgmt (SSI) EaC and I really enjoy everything else too!

Robert worked on the kindserver reconciling.

He became aware that crossplane is able to delete clusters when drift is detected. This must not happen in production clusters.

Even worse, if crossplane did delete the cluster and then set it up again correctly, argocd would be out of sync and would have no idea by default how to relate the old and new cluster.

* edgeXR does not allow persistence

* openai, llm not available as an abstraction

* currently only compute is available

* roaming of applications --> EDP must support this

* use case: a language model translates design artifacts into architecture, then provisioning is enabled

? application models ? relation to golden paths * e.g. for pure compute FaaS

WiP - this is in work.

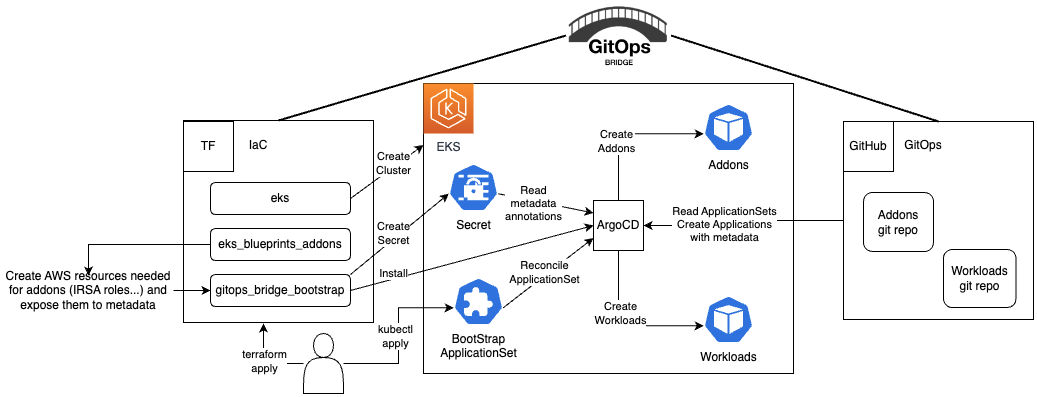

What kind of Gitops do we have with idpbuilder/CNOE ?

https://github.com/gitops-bridge-dev/gitops-bridge

WiP - this is in work.

What deployment scenarios do we have with idpbuilder/CNOE ?

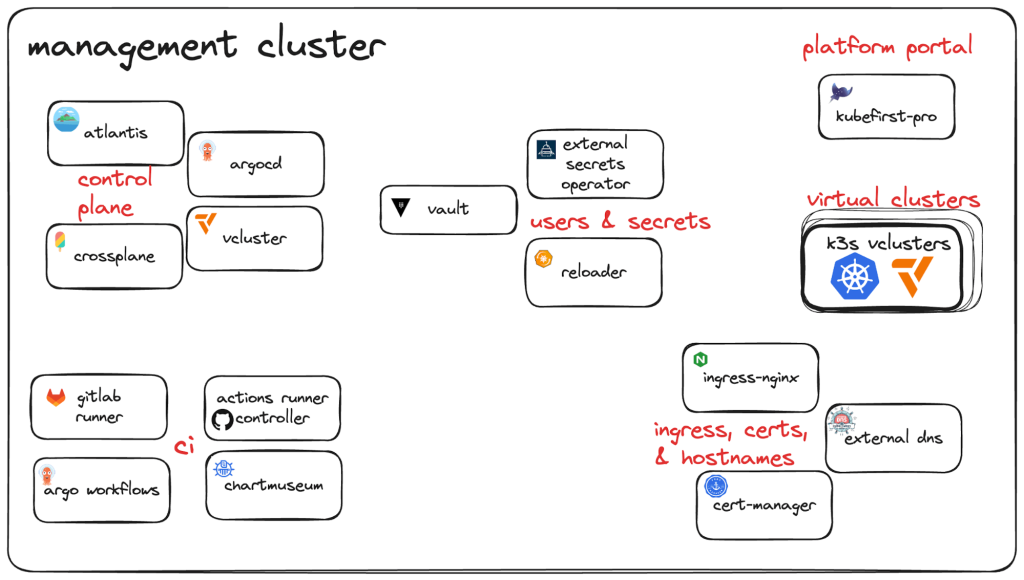

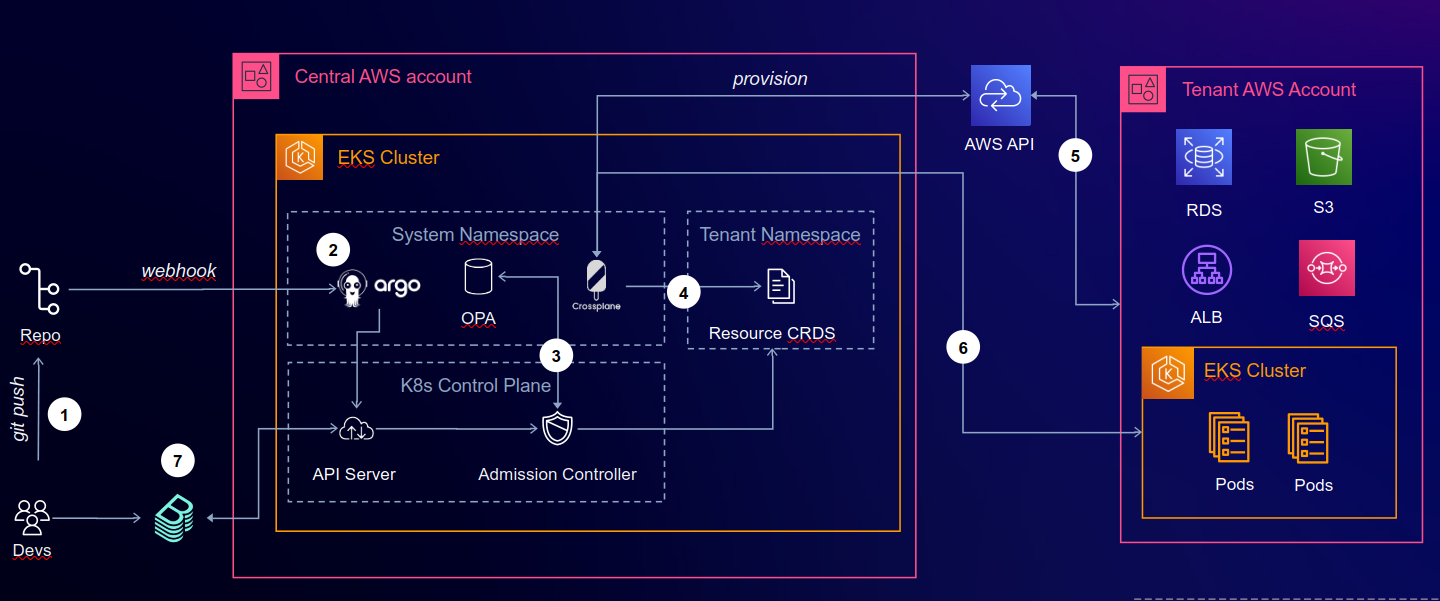

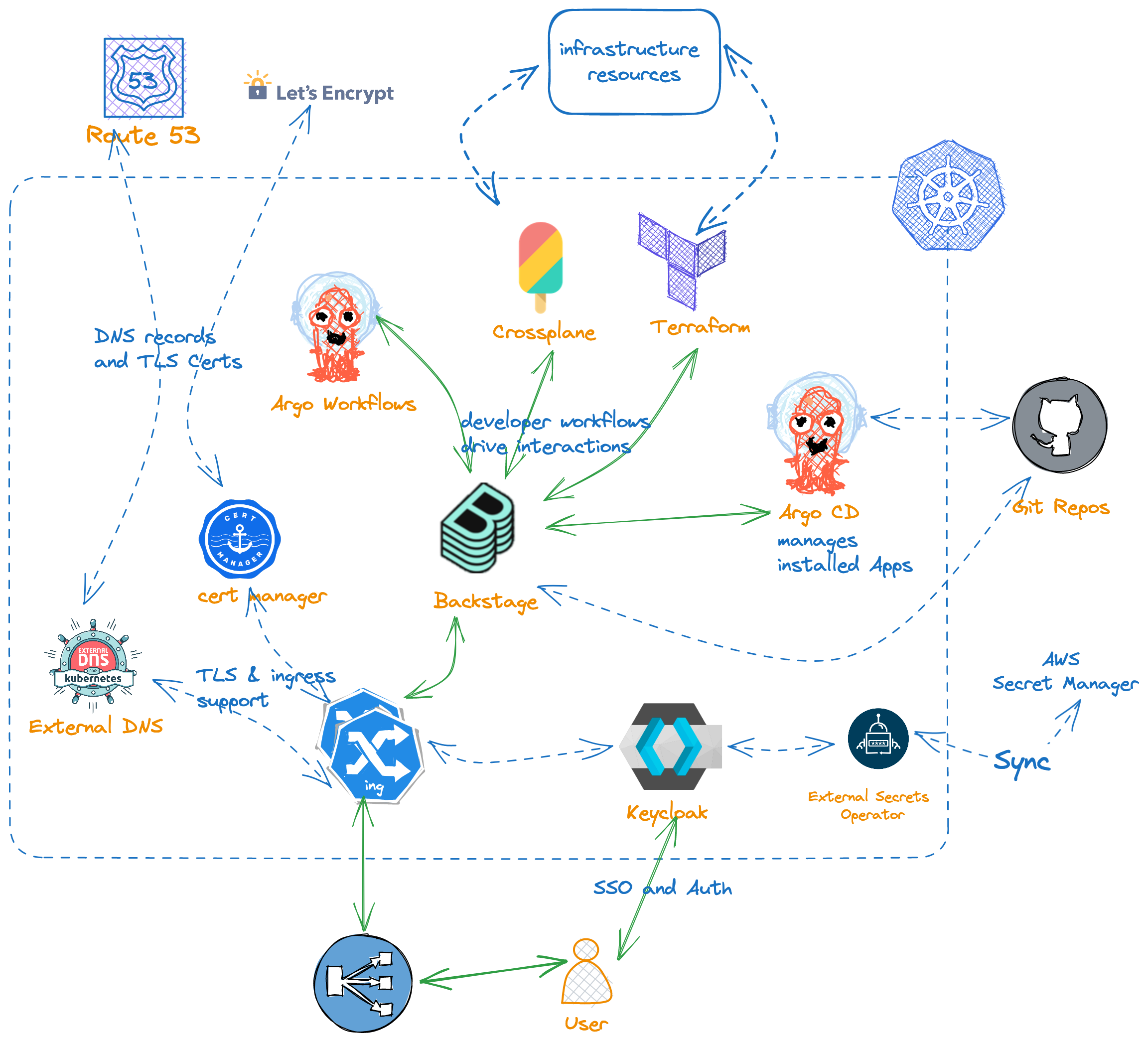

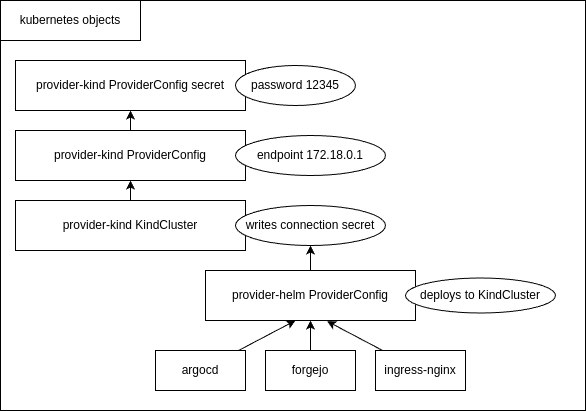

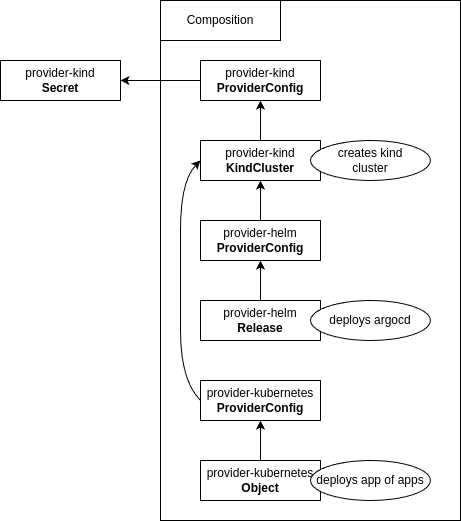

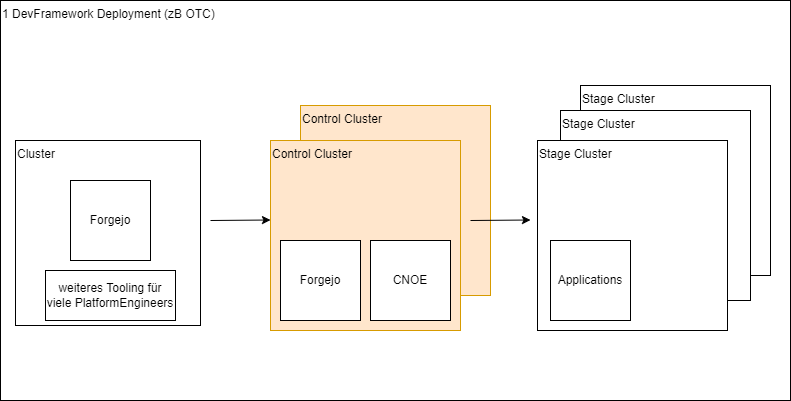

The next chart shows a system landscape of CNOE orchestration.

2024-04-PlatformEngineering-DevOpsDayRaleigh.pdf

Questions: What are the degrees of freedom in this chart? What variations with respect to environments and environment types exist?

The next chart shows a context chart of CNOE orchestration.

Questions: What are the degrees of freedom in this chart? What variations with respect to environments and environment types exist?

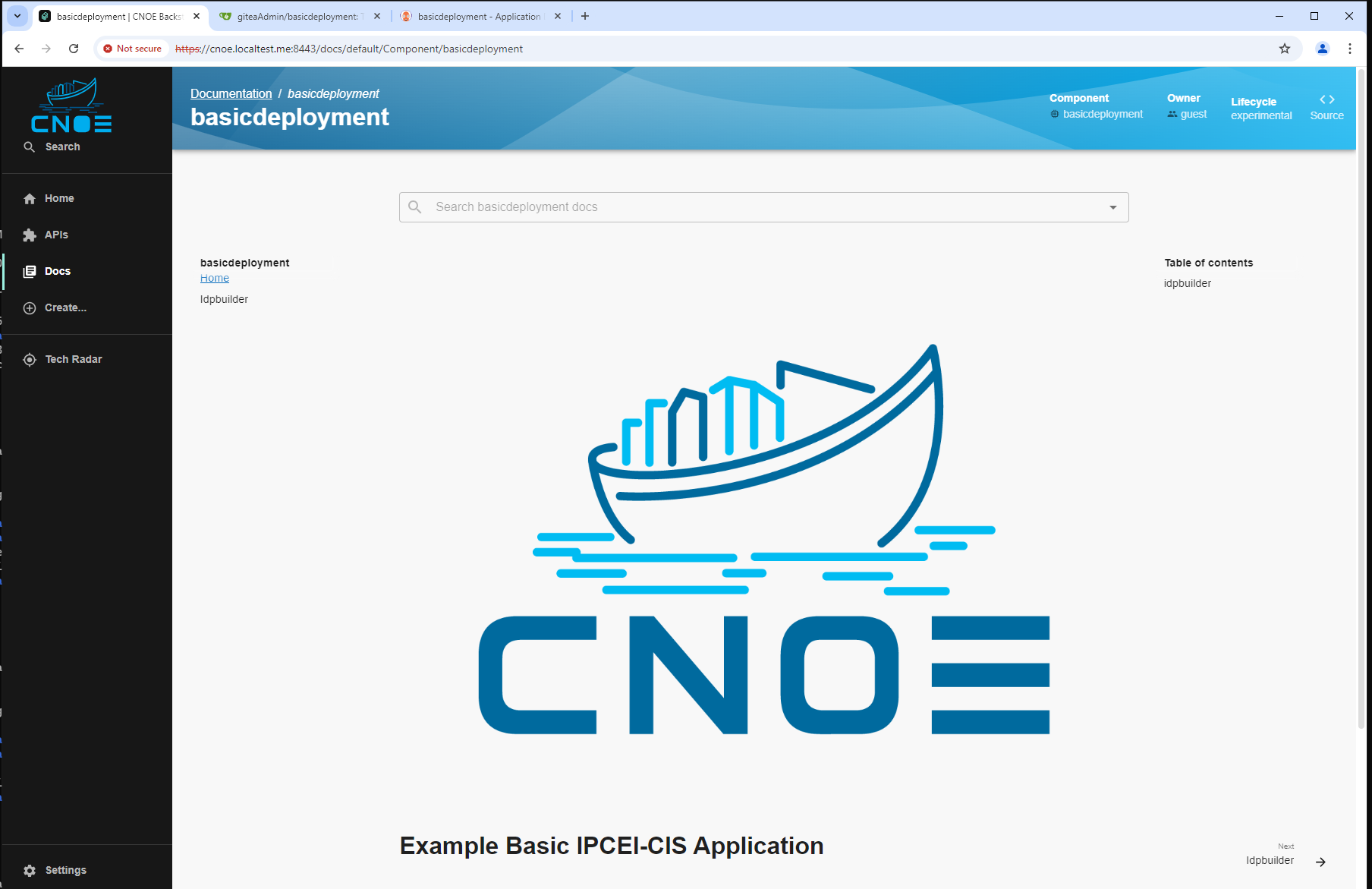

Backstage by Spotify can be seen as a Platform Portal. It is an open platform for building and managing internal developer tools, providing a unified interface for accessing various tools and resources within an organization.

Key Features of Backstage as a Platform Portal:

- Tool Integration: Backstage allows for the integration of various tools used in the development process, such as CI/CD, version control systems, monitoring, and others, into a single interface.

- Service Management: It offers the ability to register and manage services and microservices, as well as monitor their status and performance.

- Documentation and Learning Materials: Backstage includes capabilities for storing and organizing documentation, making it easier for developers to access information.

- Golden Paths: Backstage supports the concept of “Golden Paths,” enabling teams to follow recommended practices for development and tool usage.

- Modularity and Extensibility: The platform allows for the creation of plugins, enabling users to customize and extend Backstage’s functionality to fit their organization’s needs.

Backstage provides developers with centralized and convenient access to essential tools and resources, making it an effective solution for supporting Platform Engineering and developing an internal platform portal.

This document provides a comprehensive guide on the prerequisites and the process to set up and run Backstage locally on your machine.

Before you start, make sure you have the following installed on your machine:

Node.js: Backstage requires Node.js. You can download it from the Node.js website. It is recommended to use the LTS version.

Yarn: Backstage uses Yarn as its package manager. You can install it globally using npm:

npm install --global yarn

Git

Docker

To install the Backstage Standalone app, you can use npx. npx is a tool that comes preinstalled with Node.js and lets you run commands straight from npm or other registries.

npx @backstage/create-app@latest

This command will create a new directory with a Backstage app inside. The wizard will ask you for the name of the app. A subdirectory with this name is created in your current working directory.

Below is a simplified layout of the files and folders generated when creating an app.

app

├── app-config.yaml

├── catalog-info.yaml

├── package.json

└── packages

├── app

└── backend

You can run the app from the Backstage root directory by executing this command:

yarn dev

Catalog:

Docs:

API Docs:

TechDocs:

Scaffolder:

CI/CD:

Metrics:

Snyk:

SonarQube:

GitHub:

Backstage plugins and functionality extensions should be written in TypeScript/Node.js because Backstage itself is written in those languages.

Create the Plugin

To create a plugin in the project structure, you need to run the following command at the root of Backstage:

yarn new --select plugin

The wizard will ask you for the plugin ID, which will be its name. After that, a template for the plugin will be automatically created in the directory plugins/{plugin id}. Then install the required dependencies. In this example, that is "axios" for API requests.

Example:

yarn add axios

Define the Plugin’s Functionality

In the newly created plugin directory, focus on defining the plugin’s core functionality. This is where you will create components that handle the logic and user interface (UI) of the plugin. Place these components in the plugins/{plugin_id}/src/components/ folder, and if your plugin interacts with external data or APIs, manage those interactions within these components.

Set Up Routes

In the main configuration file of your plugin (typically plugins/{plugin_id}/src/routes.ts), set up the routes. Use createRouteRef() to define route references, and link them to the appropriate components in your plugins/{plugin_id}/src/components/ folder. Each route will determine which component renders for specific parts of the plugin.

Register the Plugin

Navigate to the packages/app folder and import your plugin into the main application. Register your plugin in the routes within packages/app/src/App.tsx to integrate it into the Backstage system. This creates a route for your plugin’s page.

Add Plugin to the Sidebar Menu

To make the plugin accessible through the Backstage sidebar, modify the sidebar component in packages/app/src/components/Root/Root.tsx. Add a new sidebar item linked to your plugin’s route reference, allowing users to easily access the plugin through the menu.

Test the Plugin

Run the Backstage development server using yarn dev and navigate to your plugin’s route via the sidebar or directly through its URL. Ensure that the plugin’s functionality works as expected.

All steps will be demonstrated using a simple example plugin, which will request JSON files from the API of jsonplaceholder.typicode.com and display them on a page.

Creating test-plugin:

yarn new --select plugin

Adding required dependencies. In this case only “axios” is needed for API requests

yarn add axios

Implement code of the plugin component in plugins/{plugin-id}/src/{Component name}/{filename}.tsx

import React, { useState } from 'react';

import axios from 'axios';

import { Typography, Grid } from '@material-ui/core';

import {

InfoCard,

Header,

Page,

Content,

ContentHeader,

SupportButton,

} from '@backstage/core-components';

export const TestComponent = () => {

const [posts, setPosts] = useState<any[]>([]);

const [loading, setLoading] = useState(false);

const [error, setError] = useState<string | null>(null);

const fetchPosts = async () => {

setLoading(true);

setError(null);

try {

const response = await axios.get('https://jsonplaceholder.typicode.com/posts');

setPosts(response.data);

} catch (err) {

setError('Error while fetching posts');

} finally {

setLoading(false);

}

};

return (

<Page themeId="tool">

<Header title="Welcome to the Test Plugin!" subtitle="This is a subtitle">

<SupportButton>A description of your plugin goes here.</SupportButton>

</Header>

<Content>

<ContentHeader title="Posts Section">

<SupportButton>

Click to load posts from the API.

</SupportButton>

</ContentHeader>

<Grid container spacing={3} direction="column">

<Grid item>

<InfoCard title="Information Card">

<Typography variant="body1">

This card contains information about the posts fetched from the API.

</Typography>

{loading && <Typography>Loading...</Typography>}

{error && <Typography color="error">{error}</Typography>}

{!loading && !posts.length && (

<button onClick={fetchPosts}>Request Posts</button>

)}

</InfoCard>

</Grid>

<Grid item>

{posts.length > 0 && (

<InfoCard title="Fetched Posts">

<ul>

{posts.map(post => (

<li key={post.id}>

<Typography variant="h6">{post.title}</Typography>

<Typography>{post.body}</Typography>

</li>

))}

</ul>

</InfoCard>

)}

</Grid>

</Grid>

</Content>

</Page>

);

};

Set up routes in plugins/{plugin_id}/src/routes.ts:

import { createRouteRef } from '@backstage/core-plugin-api';

export const rootRouteRef = createRouteRef({

id: 'test-plugin',

});

In packages/app/src/App.tsx, register the plugin in routes.

Import of the plugin:

import { TestPluginPage } from '@internal/backstage-plugin-test-plugin';

Adding route:

const routes = (

<FlatRoutes>

... //{Other Routes}

<Route path="/test-plugin" element={<TestPluginPage />} />

</FlatRoutes>

)

In packages/app/src/components/Root/Root.tsx, add the plugin to the Root component as another SidebarItem:

export const Root = ({ children }: PropsWithChildren<{}>) => (

<SidebarPage>

<Sidebar>

... //{Other sidebar items}

<SidebarItem icon={ExtensionIcon} to="/test-plugin" text="Test Plugin" />

</Sidebar>

{children}

</SidebarPage>

);

yarn dev

Kratix is a Kubernetes-native framework that helps platform engineering teams automate the provisioning and management of infrastructure and services through custom-defined abstractions called Promises. It allows teams to extend Kubernetes functionality and provide resources in a self-service manner to developers, streamlining the delivery and management of workloads across environments.

Key concepts of Kratix:

Resource provisioning automation. Kratix simplifies infrastructure creation for developers through the abstraction of “Promises.” This means developers can simply request the necessary resources (like databases, message queues) without dealing with the intricacies of infrastructure management.

Flexibility and adaptability. Platform teams can customize and adapt Kratix to specific needs by creating custom Promises for various services, allowing the infrastructure to meet the specific requirements of the organization.

Unified resource request interface. Developers can use a single API (Kubernetes) to request resources, simplifying interaction with infrastructure and reducing complexity when working with different tools and systems.

Although Kratix offers great flexibility, it can also lead to more complex setup and platform management processes. Creating custom Promises and configuring their behavior requires time and effort.

Kubernetes dependency. Kratix relies on Kubernetes, which makes it less applicable in environments that don’t use Kubernetes or containerization technologies. It might also lead to integration challenges if an organization uses other solutions.

Limited ecosystem. Kratix doesn’t have as mature an ecosystem as some other infrastructure management solutions (e.g., Terraform, Pulumi). This may limit the availability of ready-made solutions and tools, increasing the amount of manual work when implementing Kratix.

Humanitec is an Internal Developer Platform (IDP) that helps platform engineering teams automate the provisioning and management of infrastructure and services through dynamic configuration and environment management.

It allows teams to extend their infrastructure capabilities and provide resources in a self-service manner to developers, streamlining the deployment and management of workloads across various environments.

Key concepts of Humanitec:

Application Definition:

This is an abstraction where developers define their application, including its services, environments, and dependencies. It abstracts away infrastructure details, allowing developers to focus on building and deploying their applications.

Dynamic Configuration Management:

Humanitec automatically manages the configuration of applications and services across multiple environments (e.g., development, staging, production). It ensures consistency and alignment of configurations as applications move through different stages of deployment.

Humanitec simplifies the development and delivery process by providing self-service deployment options while maintaining centralized governance and control for platform teams.

Resource provisioning automation. Humanitec automates infrastructure and environment provisioning, allowing developers to focus on building and deploying applications without worrying about manual configuration.

Dynamic environment management. Humanitec manages application configurations across different environments, ensuring consistency and reducing manual configuration errors.

Golden Paths. Best-practice workflows and processes guide developers through infrastructure provisioning and application deployment. This ensures consistency and reduces cognitive load by providing a set of recommended practices.

Unified resource management interface. Developers can use Humanitec’s interface to request resources and deploy applications, reducing complexity and improving the development workflow.

Humanitec is commercially licensed software.

Integration challenges. Humanitec’s dependency on specific cloud-native environments can create challenges for organizations with diverse infrastructures or those using legacy systems.

Cost. Depending on usage, Humanitec might introduce additional costs related to the implementation of an Internal Developer Platform, especially for smaller teams.

Harder to customise.

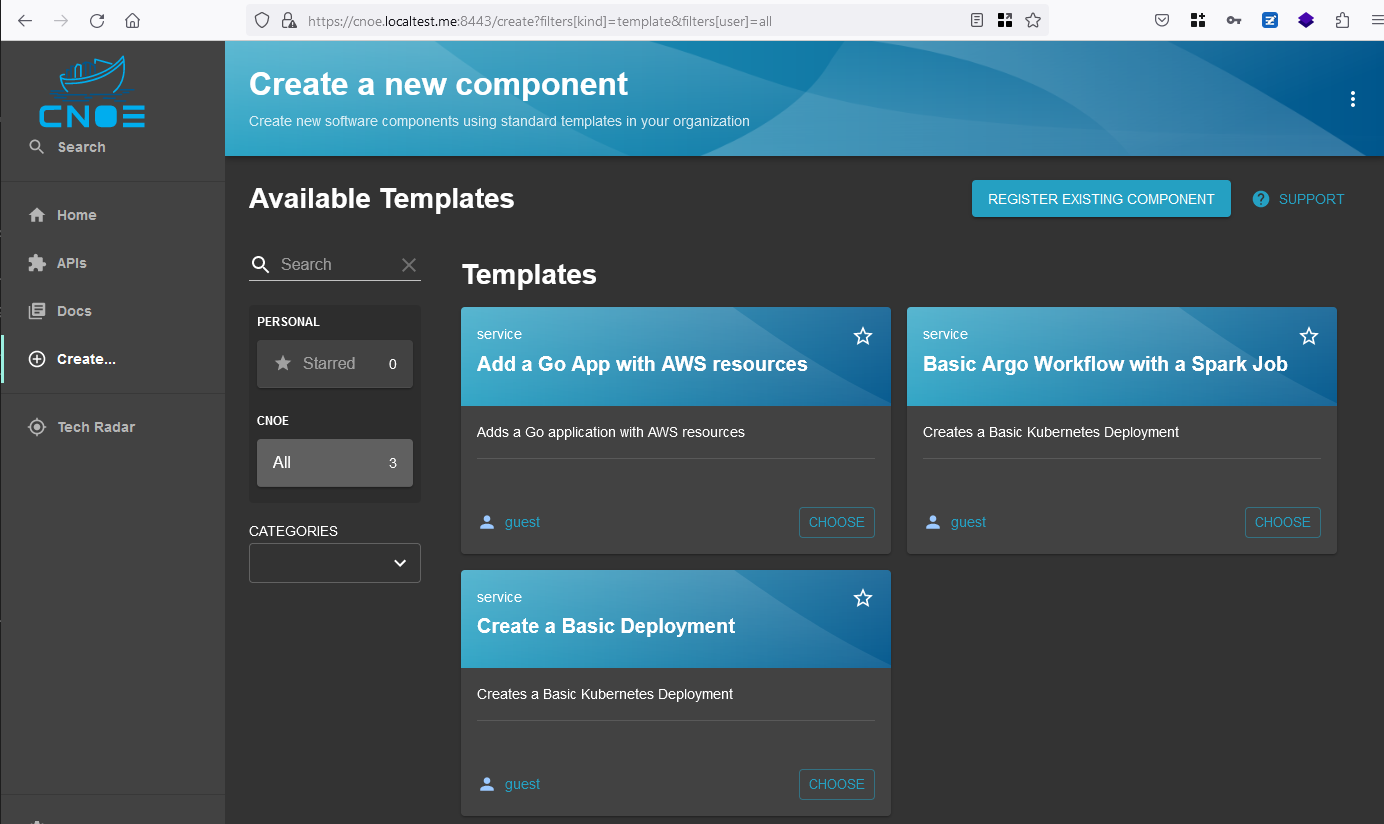

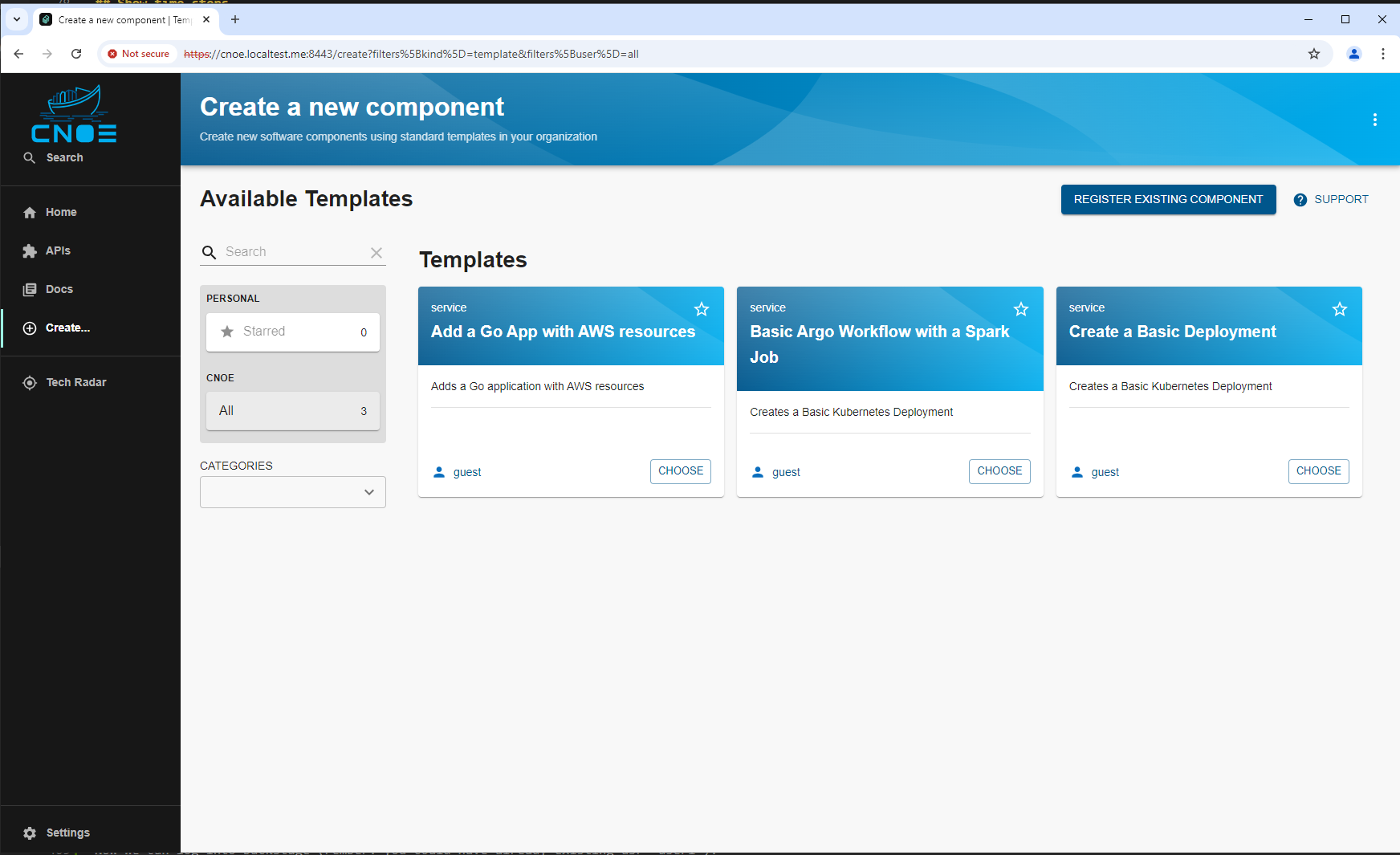

This Backstage template YAML automates the creation of an Argo Workflow for Kubernetes that includes a basic Spark job, providing a convenient way to configure and deploy workflows involving data processing or machine learning jobs. Users can define key parameters, such as the application name and the path to the main Spark application file. The template creates necessary Kubernetes resources, publishes the application code to a Gitea Git repository, registers the application in the Backstage catalog, and deploys it via ArgoCD for easy CI/CD management.

This template is designed for teams that need a streamlined approach to deploy and manage data processing or machine learning jobs using Spark within an Argo Workflow environment. It simplifies the deployment process and integrates the application with a CI/CD pipeline. The template performs the following:

This template boosts productivity by automating steps required for setting up Argo Workflows and Spark jobs, integrating version control, and enabling centralized management and visibility, making it ideal for projects requiring efficient deployment and scalable data processing solutions.

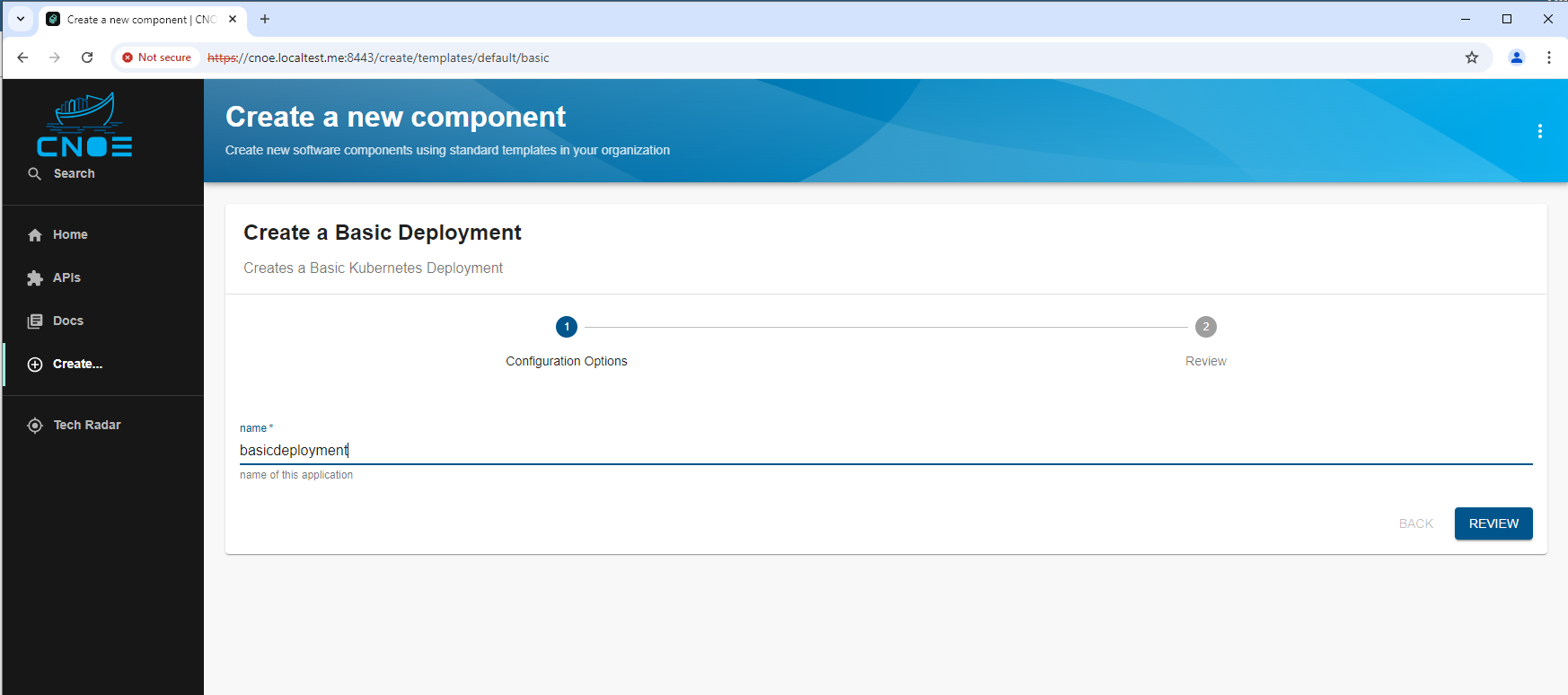

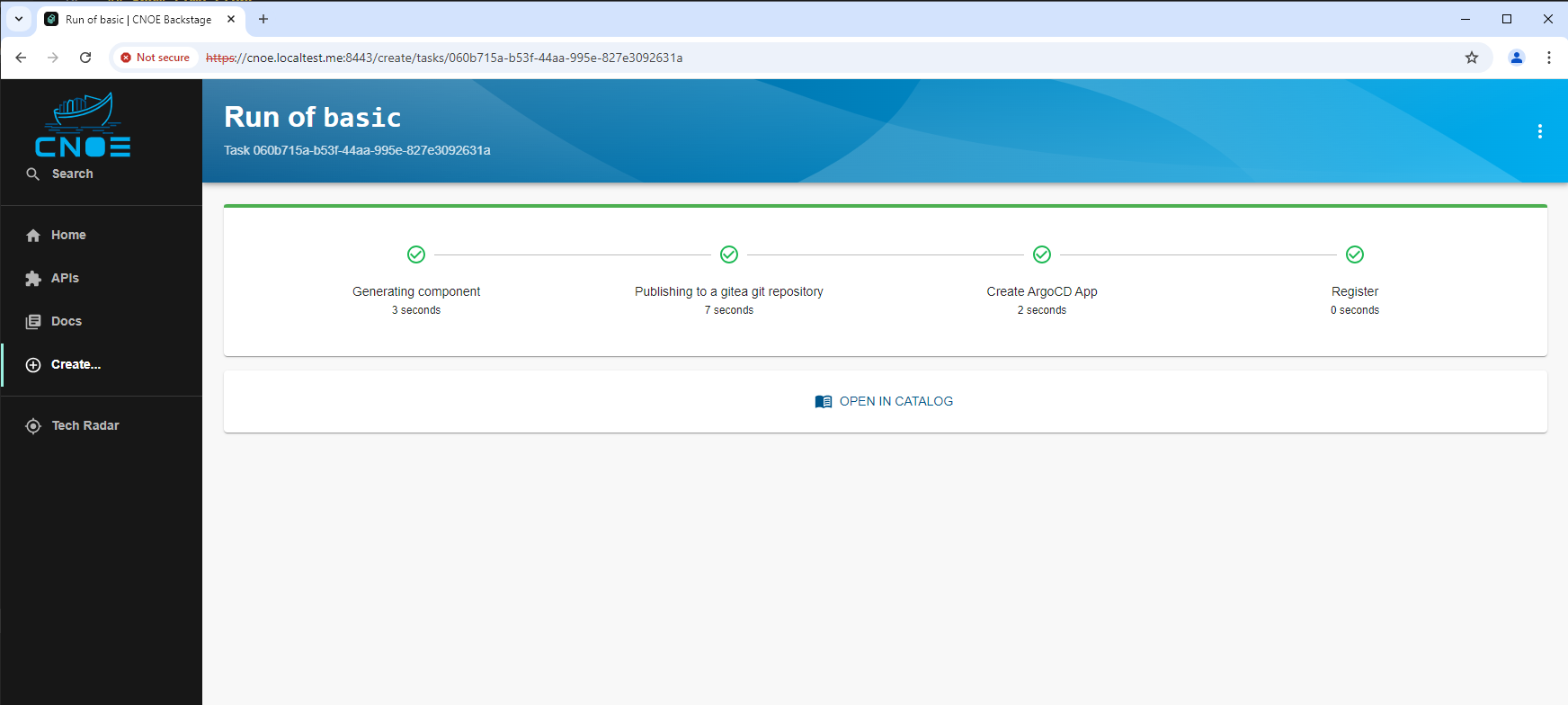

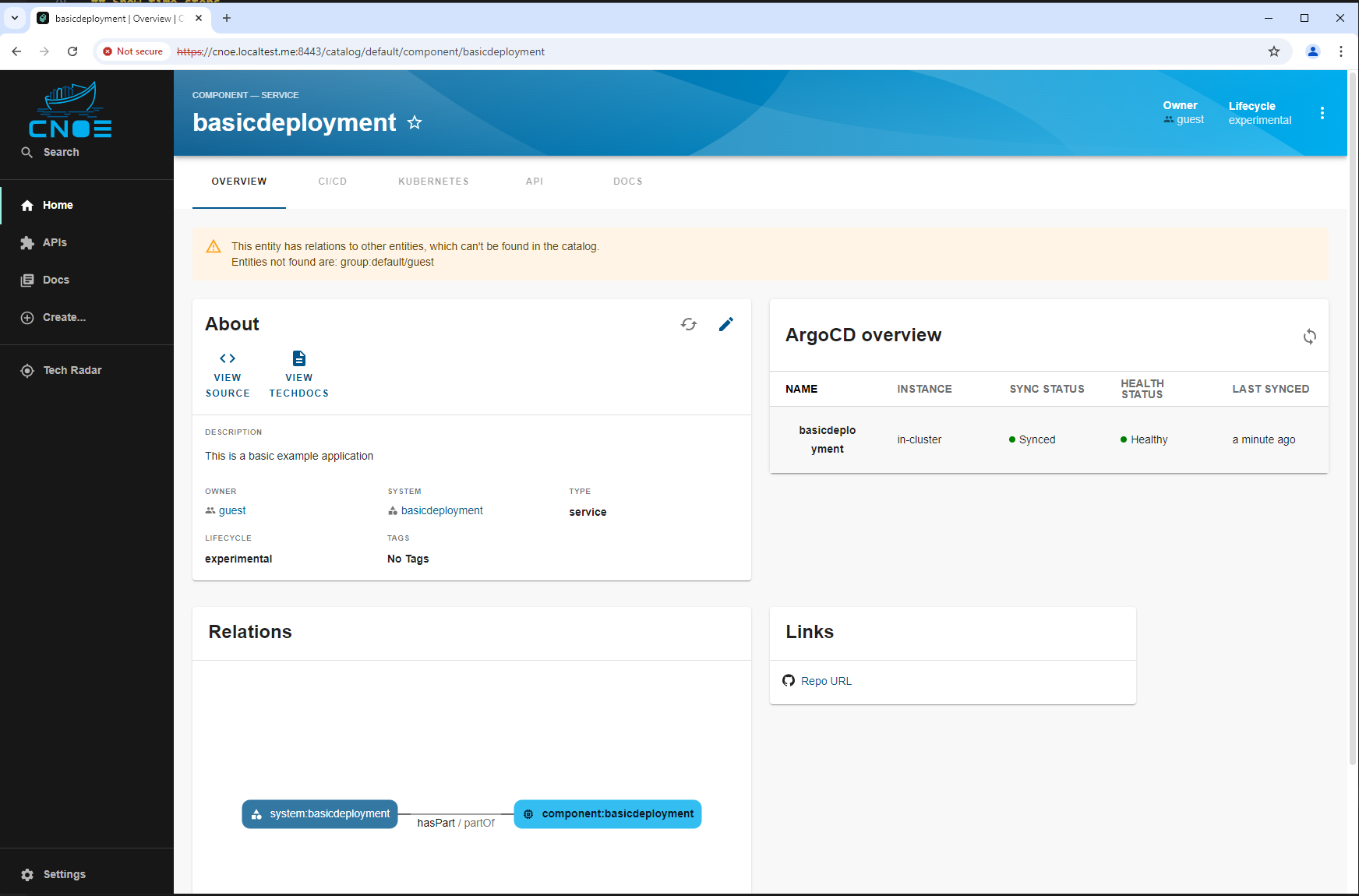

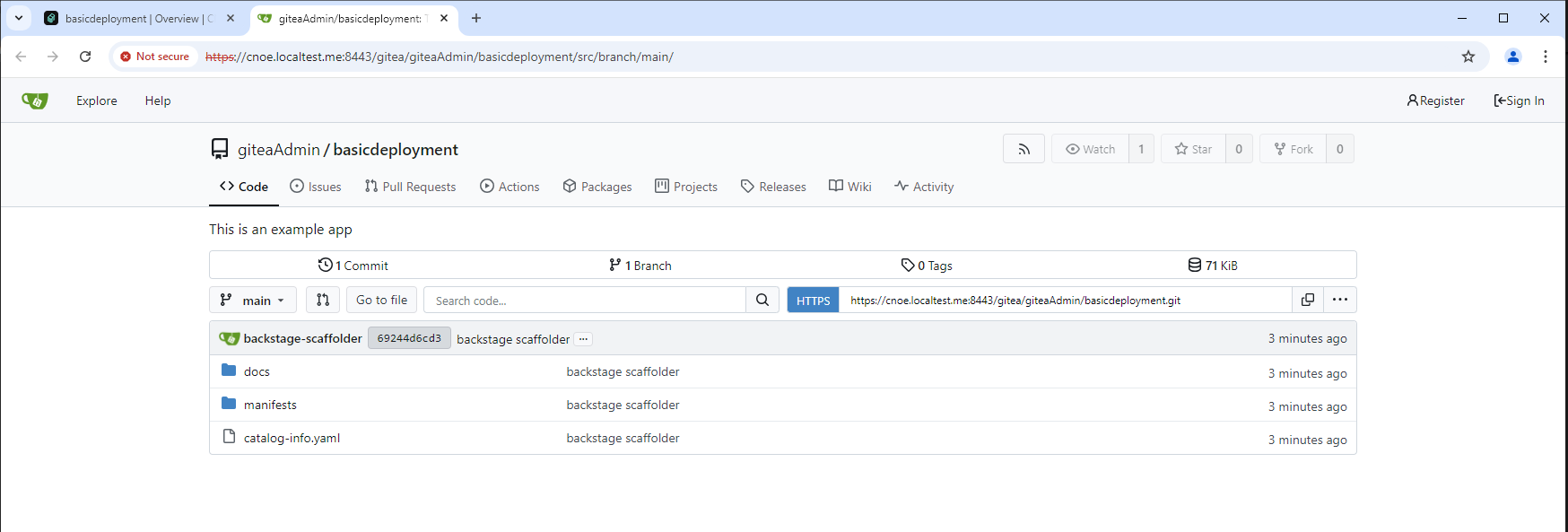

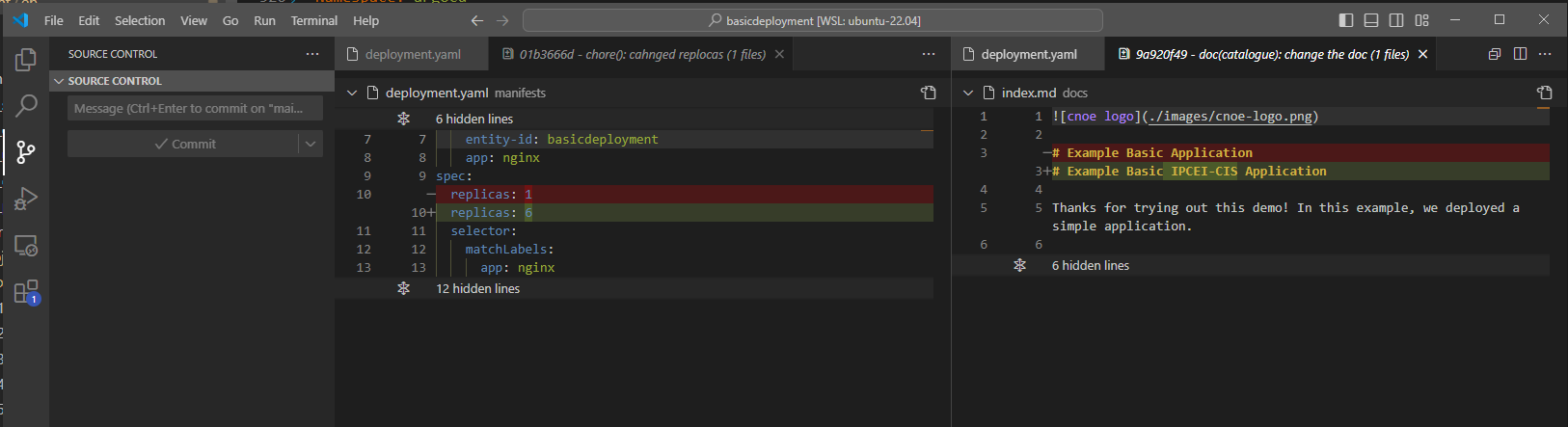

This Backstage template YAML automates the creation of a basic Kubernetes Deployment, aimed at simplifying the deployment and management of applications in Kubernetes for the user. The template allows users to define essential parameters, such as the application’s name, and then creates and configures the Kubernetes resources, publishes the application code to a Gitea Git repository, and registers the application in the Backstage catalog for tracking and management.

The template is designed for teams needing a streamlined approach to deploy applications in Kubernetes while automatically configuring their CI/CD pipelines. It performs the following:

This template enhances productivity by automating several steps required for deployment, version control, and registration, making it ideal for projects where fast, consistent deployment and centralized management are required.

The idpbuilder uses KIND as its Kubernetes cluster. Installing it in a virtual machine is recommended: MMS Linux clients are unable to run KIND natively on the local machine because of network problems. Pods, for example, can’t connect to the internet.

Windows and Mac users already utilize a virtual machine for the Docker Linux environment.

For building idpbuilder the source code needs to be downloaded and compiled:

git clone https://github.com/cnoe-io/idpbuilder.git

cd idpbuilder

go build

The idpbuilder binary will be created in the current directory.

To start the idpbuilder binary execute the following command:

./idpbuilder create --use-path-routing --log-level debug --package https://github.com/cnoe-io/stacks//ref-implementation

At the end of the idpbuilder execution, a link to the installed ArgoCD instance is shown. The credentials for access can be obtained by executing:

./idpbuilder get secrets

A Kubernetes config is created in the default location $HOME/.kube/config. Managing your Kubernetes configs carefully is recommended, so that access to other clusters like the OSC is not deleted unintentionally.

To show all running KIND nodes execute:

kubectl get nodes -o wide

To see all running pods:

kubectl get pods -o wide

Follow this documentation: https://github.com/cnoe-io/stacks/tree/main/ref-implementation

The cluster can be deleted by executing:

idpbuilder delete cluster

CNOE provides two implementations of an IDP:

Neither of them can be run as-is on bare metal or an OSC instance. The Amazon implementation is complex and makes use of Terraform, which is currently not supported on either bare metal or OSC. Therefore the KIND implementation is used and customized to support the idpbuilder installation. The idpbuilder also does some network magic which needs to be replicated.

Several prerequisites have to be provided to support the idpbuilder on bare metal or the OSC:

Talos Linux is chosen for a bare metal Kubernetes instance.

As soon as the idpbuilder works correctly on bare metal, the next step is to apply it to an OSC instance.

Append these lines to /etc/hosts:

127.0.0.1 gitea.cnoe.localtest.me

127.0.0.1 cnoe.localtest.me

Install nginx by executing:

sudo apt install nginx

Replace /etc/nginx/sites-enabled/default with the following content:

server {

listen 8443 ssl default_server;

listen [::]:8443 ssl default_server;

include snippets/snakeoil.conf;

location / {

proxy_pass http://10.5.0.20:80;

proxy_http_version 1.1;

proxy_cache_bypass $http_upgrade;

proxy_set_header Host $host;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-Host $host;

proxy_set_header X-Forwarded-Proto $scheme;

}

}

Start nginx by executing:

sudo systemctl enable nginx

sudo systemctl restart nginx

For building idpbuilder the source code needs to be downloaded and compiled:

git clone https://github.com/cnoe-io/idpbuilder.git

cd idpbuilder

go build

The idpbuilder binary will be created in the current directory.

Open the idpbuilder folder in VS Code:

code .

Create a new launch setting. Add the "args" parameter to the launch setting:

{

"version": "0.2.0",

"configurations": [

{

"name": "Launch Package",

"type": "go",

"request": "launch",

"mode": "auto",

"program": "${fileDirname}",

"args": ["create", "--use-path-routing", "--package", "https://github.com/cnoe-io/stacks//ref-implementation"]

}

]

}

Talos by default will create docker containers, similar to KIND. Create the cluster by executing:

talosctl cluster create

mkdir -p localpathprovisioning

cd localpathprovisioning

cat > kustomization.yaml <<EOF

apiVersion: kustomize.config.k8s.io/v1beta1

kind: Kustomization

resources:

- github.com/rancher/local-path-provisioner/deploy?ref=v0.0.26

patches:

- patch: |-

kind: ConfigMap

apiVersion: v1

metadata:

name: local-path-config

namespace: local-path-storage

data:

config.json: |-

{

"nodePathMap":[

{

"node":"DEFAULT_PATH_FOR_NON_LISTED_NODES",

"paths":["/var/local-path-provisioner"]

}

]

}

- patch: |-

apiVersion: storage.k8s.io/v1

kind: StorageClass

metadata:

name: local-path

annotations:

storageclass.kubernetes.io/is-default-class: "true"

- patch: |-

apiVersion: v1

kind: Namespace

metadata:

name: local-path-storage

labels:

pod-security.kubernetes.io/enforce: privileged

EOF

kustomize build | kubectl apply -f -

rm -f localpathprovisioning.yaml kustomization.yaml

cd ..

rmdir localpathprovisioning

kubectl apply -f https://raw.githubusercontent.com/metallb/metallb/v0.14.8/config/manifests/metallb-native.yaml

sleep 50

cat <<EOF | kubectl apply -f -

apiVersion: metallb.io/v1beta1

kind: IPAddressPool

metadata:

name: first-pool

namespace: metallb-system

spec:

addresses:

- 10.5.0.20-10.5.0.130

EOF

cat <<EOF | kubectl apply -f -

apiVersion: metallb.io/v1beta1

kind: L2Advertisement

metadata:

name: homelab-l2

namespace: metallb-system

spec:

ipAddressPools:

- first-pool

EOF

helm upgrade --install ingress-nginx ingress-nginx \

--repo https://kubernetes.github.io/ingress-nginx \

--namespace ingress-nginx --create-namespace

sleep 30

Edit the function Run in pkg/build/build.go and comment out the creation of the KIND cluster:

/*setupLog.Info("Creating kind cluster")

if err := b.ReconcileKindCluster(ctx, recreateCluster); err != nil {

return err

}*/

Compile the idpbuilder:

go build

Then, in VS Code, switch to main.go in the root directory of the idpbuilder and start debugging.

At the end of the idpbuilder execution, a link to the installed ArgoCD instance is shown. The credentials for access can be obtained by executing:

./idpbuilder get secrets

A Kubernetes config is created in the default location $HOME/.kube/config. Managing your Kubernetes configs carefully is recommended, so that access to other clusters like the OSC is not deleted unintentionally.

To show all running Talos nodes execute:

kubectl get nodes -o wide

To see all running pods:

kubectl get pods -o wide

The cluster can be deleted by executing:

talosctl cluster destroy

Required:

Add *.cnoe.localtest.me to the Talos cluster DNS, pointing to the host device IP address, which runs nginx.

Create an SSL certificate with cnoe.localtest.me as common name. Edit the nginx config to load this certificate. Configure idpbuilder to distribute this certificate instead of the one idpbuilder distributes by default.

Optimizations:

Implement an idpbuilder uninstall. This is especially important when working on the OSC instance.

Remove or configure gitea.cnoe.localtest.me; it does not seem to work even in the local idpbuilder installation with KIND.

Improve the idpbuilder to support Kubernetes instances other than KIND. This can be done either by parametrization or by utilizing Terraform / OpenTofu or Crossplane.

The idpbuilder supports creating platforms using either path based or subdomain based routing:

idpbuilder create --log-level debug --package https://github.com/cnoe-io/stacks//ref-implementation

idpbuilder create --use-path-routing --log-level debug --package https://github.com/cnoe-io/stacks//ref-implementation

However, even though argo eventually reports all deployments as green, not all of the demo is actually functional (verification?). This is due to hardcoded values that, for example, point to the path-routed location of gitea to access git repos. Thus, backstage might not be able to access them.

Within the demo / ref-implementation, a simple search & replace is suggested to change urls to fit the given environment. But proper scripting/templating could take care of that as the hostnames and necessary properties should be available. This is, however, a tedious and repetitive task one has to keep in mind throughout the entire system, which might lead to an explosion of config options in the future. Code that addresses correct routing is located in both the stack templates and the idpbuilder code.
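The templating idea above can be illustrated with a minimal sketch. It assumes a single shared routing configuration from which every component URL is derived. The helper `serviceUrl`, the type `RoutingConfig`, and the port 8443 are assumptions made for illustration and are not part of the idpbuilder or the stacks repo.

```typescript
// Hypothetical helper: derives a component's public URL from one shared
// routing configuration instead of hard-coding it in each template.
type RoutingConfig = {
  host: string;            // public base hostname, e.g. "cnoe.localtest.me"
  port?: number;           // optional HTTPS port; omitted from the URL if 443
  usePathRouting: boolean; // mirrors the --use-path-routing flag
};

function serviceUrl(service: string, cfg: RoutingConfig): string {
  const port = cfg.port !== undefined && cfg.port !== 443 ? `:${cfg.port}` : "";
  return cfg.usePathRouting
    ? `https://${cfg.host}${port}/${service}`    // path based routing
    : `https://${service}.${cfg.host}${port}`;   // subdomain based routing
}

// Port 8443 is an assumption for this example.
const pathRouted: RoutingConfig = { host: "cnoe.localtest.me", port: 8443, usePathRouting: true };
const subdomainRouted: RoutingConfig = { ...pathRouted, usePathRouting: false };

console.log(serviceUrl("gitea", pathRouted));      // https://cnoe.localtest.me:8443/gitea
console.log(serviceUrl("gitea", subdomainRouted)); // https://gitea.cnoe.localtest.me:8443
```

Deriving all URLs from one place keeps the number of configuration options small, since only the routing configuration changes between environments.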

For the most part, components communicate with either the cluster API using the default DNS or with each other via http(s) using the public DNS/hostname (+ path-routing scheme). The latter is necessary due to configs that are visible and modifiable by users. This includes, for example, the argocd config for components that have to sync to a gitea git repo. Using the same URL for internal and external resolution is imperative.

The idpbuilder achieves transparent internal DNS resolution by overriding the public DNS name in the cluster’s internal DNS server (coreDNS). Subsequently, within the cluster requests to the public hostnames resolve to the IP of the internal ingress controller service. Thus, internal and external requests take a similar path and run through proper routing (rewrites, ssl/tls, etc).

One has to keep in mind that some app-specific features might not work properly, or only with hacks, when using path based routing (e.g. docker registry in gitea). Furthermore, supporting multiple setup strategies will become cumbersome as the platform grows. We should probably only support one type of setup to keep the system as simple as possible, but allow modification if necessary.

DNS solutions like nip.io or the already used localtest.me mitigate the need for path based routing.

HTTP is a cornerstone of the internet due to its high flexibility. Starting

from HTTP/1.1 each request in the protocol contains among others a path and a

Hostname in its header. While an HTTP request is sent to a single IP address

/ server, these two pieces of data allow (distributed) systems to handle

requests in various ways.

$ curl -v http://google.com/something > /dev/null

* Connected to google.com (2a00:1450:4001:82f::200e) port 80

* using HTTP/1.x

> GET /something HTTP/1.1

> Host: google.com

> User-Agent: curl/8.10.1

> Accept: */*

...

Imagine requesting http://myhost.foo/some/file.html. In a simple setup, the web server that myhost.foo resolves to would serve static files from some directory, /<some_dir>/some/file.html.

In more complex systems, one might have multiple services that fulfill various roles, for example a service that generates HTML sites of articles from a CMS and a service that can convert images into various formats. Using path-routing both services are available on the same host from a user’s POV.

An article served from http://myhost.foo/articles/news1.html would be

generated from the article service and points to an image

http://myhost.foo/images/pic.jpg which in turn is generated by the image

converter service. When a user sends an HTTP request to myhost.foo, they hit

a reverse proxy which forwards the request based on the requested path to some

other system, waits for a response, and subsequently returns that response to

the user.
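The forwarding decision of such a reverse proxy can be sketched as a simple prefix lookup. This is a minimal illustration, not a real proxy: the routing table, the upstream names `article-service` and `image-converter`, and the function `routeByPath` are hypothetical.

```typescript
// Hypothetical routing table: path prefix -> upstream base URL.
const pathRoutes: Array<{ prefix: string; upstream: string }> = [
  { prefix: "/articles/", upstream: "http://article-service:8080" },
  { prefix: "/images/", upstream: "http://image-converter:8080" },
];

// Returns the upstream URL a reverse proxy would forward the request to,
// or null if no route matches. The longest matching prefix wins.
function routeByPath(requestPath: string): string | null {
  const match = pathRoutes
    .filter(r => requestPath.startsWith(r.prefix))
    .sort((a, b) => b.prefix.length - a.prefix.length)[0];
  return match ? match.upstream + requestPath : null;
}

console.log(routeByPath("/articles/news1.html")); // http://article-service:8080/articles/news1.html
console.log(routeByPath("/images/pic.jpg"));      // http://image-converter:8080/images/pic.jpg
console.log(routeByPath("/unknown"));             // null
```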

Such a setup hides the complexity from the user and allows the creation of large distributed, scalable systems acting as a unified entity from the outside. Since everything is served on the same host, the browser is inclined to trust all downstream services. This allows for easier ‘communication’ between services through the browser. For example, cookies could be valid for the entire host and thus authentication data could be forwarded to requested downstream services without the user having to explicitly re-authenticate.

Furthermore, services ‘know’ their user-facing location by knowing their path

and the paths to other services as paths are usually set as a convention and /

or hard-coded. In practice, this makes configuration of the entire system

somewhat easier, especially if you have various environments for testing,

development, and production. The hostname of the system does not matter as one

can use hostname-relative URLs, e.g. /some/service.

Load balancing is also easily achievable by multiplying the number of service instances. Most reverse proxy systems are able to apply various load balancing strategies to forward traffic to downstream systems.
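As an illustration of one such strategy, the following sketch implements plain round-robin selection over a list of service instances. The function `roundRobin` and the instance addresses are assumptions for this example. Real reverse proxies offer this and more elaborate strategies as built-in configuration.

```typescript
// Round-robin selection over the instances of one downstream service.
// Returns a function that yields the next instance on every call.
function roundRobin<T>(instances: readonly T[]): () => T {
  if (instances.length === 0) {
    throw new Error("at least one instance is required");
  }
  let next = 0;
  return () => {
    const chosen = instances[next];
    next = (next + 1) % instances.length; // wrap around after the last instance
    return chosen;
  };
}

// Hypothetical instance addresses of the article service.
const pickArticleInstance = roundRobin([
  "http://article-1:8080",
  "http://article-2:8080",
  "http://article-3:8080",
]);
```

Each call to `pickArticleInstance()` returns the next address in order and starts over after the third one.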

Problems might arise if downstream systems are not built with path-routing in mind. Some systems must be served from the root of a domain, see for example the container registry spec.

Each downstream service in a distributed system is served from a different

host, typically a subdomain, e.g. serviceA.myhost.foo and

serviceB.myhost.foo. This gives services full control over their respective

host, and even allows them to do path-routing within each system. Moreover,

hostname-routing allows the entire system to create more flexible and powerful

routing schemes in terms of scalability. Intra-system communication becomes

somewhat harder as the browser treats each subdomain as a separate host,

shielding cookies, for example, from one another.

Each host that serves some services requires a DNS entry that has to be published to the clients (from some DNS server). Depending on the environment this can become quite tedious as DNS resolution on the internet and intranets might have to deviate. This applies to intra-cluster communication as well, as seen with the idpbuilder’s platform. In this case, external DNS resolution has to be replicated within the cluster to be able to use the same URLs to address for example gitea.

The following example depicts DNS-only routing. By defining separate DNS entries for each service / subdomain requests are resolved to the respective servers. In theory, no additional infrastructure is necessary to route user traffic to each service. However, as services are completely separated other infrastructure like authentication possibly has to be duplicated.

When using hostname based routing, one does not have to set different IPs for

each hostname. Instead, having multiple DNS entries pointing to the same set of

IPs allows re-using existing infrastructure. As shown below, a reverse proxy is

able to forward requests to downstream services based on the Host request

header. This way, requests for a specific hostname can be forwarded to a defined service.

At the same time, one could imagine a multi-tenant system that differentiates

customer systems by name, e.g. tenant-1.cool.system and

tenant-2.cool.system. Configured as a wildcard-style domain, *.cool.system

could point to a reverse proxy that forwards requests to a tenant’s instance of

a system, allowing re-use of central infrastructure while still hosting

separate systems per tenant.
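Both cases, exact hostnames and a wildcard domain, can be sketched as a lookup on the Host header. The rule table, the upstream addresses, and the function `routeByHost` are hypothetical. The sketch ignores IPv6 literals in the Host header.

```typescript
// Hypothetical host rules; a leading "*." marks a wildcard subdomain.
const hostRoutes: Array<{ pattern: string; upstream: (host: string) => string }> = [
  { pattern: "serviceA.myhost.foo", upstream: () => "http://service-a:8080" },
  { pattern: "serviceB.myhost.foo", upstream: () => "http://service-b:8080" },
  // One wildcard entry serves every tenant; the first label selects the instance.
  { pattern: "*.cool.system", upstream: host => `http://${host.split(".")[0]}.tenants.internal:8080` },
];

function routeByHost(hostHeader: string): string | null {
  // Hostnames are case-insensitive and the header may carry a port.
  const host = hostHeader.toLowerCase().split(":")[0];
  for (const rule of hostRoutes) {
    const pattern = rule.pattern.toLowerCase();
    const matches = pattern.startsWith("*.")
      ? host.endsWith(pattern.slice(1)) && host.length > pattern.length - 1
      : host === pattern;
    if (matches) return rule.upstream(host);
  }
  return null; // no rule for this hostname
}

console.log(routeByHost("serviceA.myhost.foo"));      // http://service-a:8080
console.log(routeByHost("tenant-2.cool.system:443")); // http://tenant-2.tenants.internal:8080
console.log(routeByHost("cool.system"));              // null
```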

The implicit dependency on DNS resolution generally makes this kind of routing

more complex and error-prone as changes to DNS server entries are not always

possible or modifiable by everyone. Also, local changes to your /etc/hosts

file are a constant pain and should be seen as a dirty hack. As mentioned

above, dynamic DNS solutions like nip.io are often helpful in this case.

Path and hostname based routing are the two most common methods of HTTP traffic

routing. They can be used separately but more often they are used in

conjunction. Due to HTTP’s versatility other forms of HTTP routing, for example

based on the Content-Type Header are also very common.

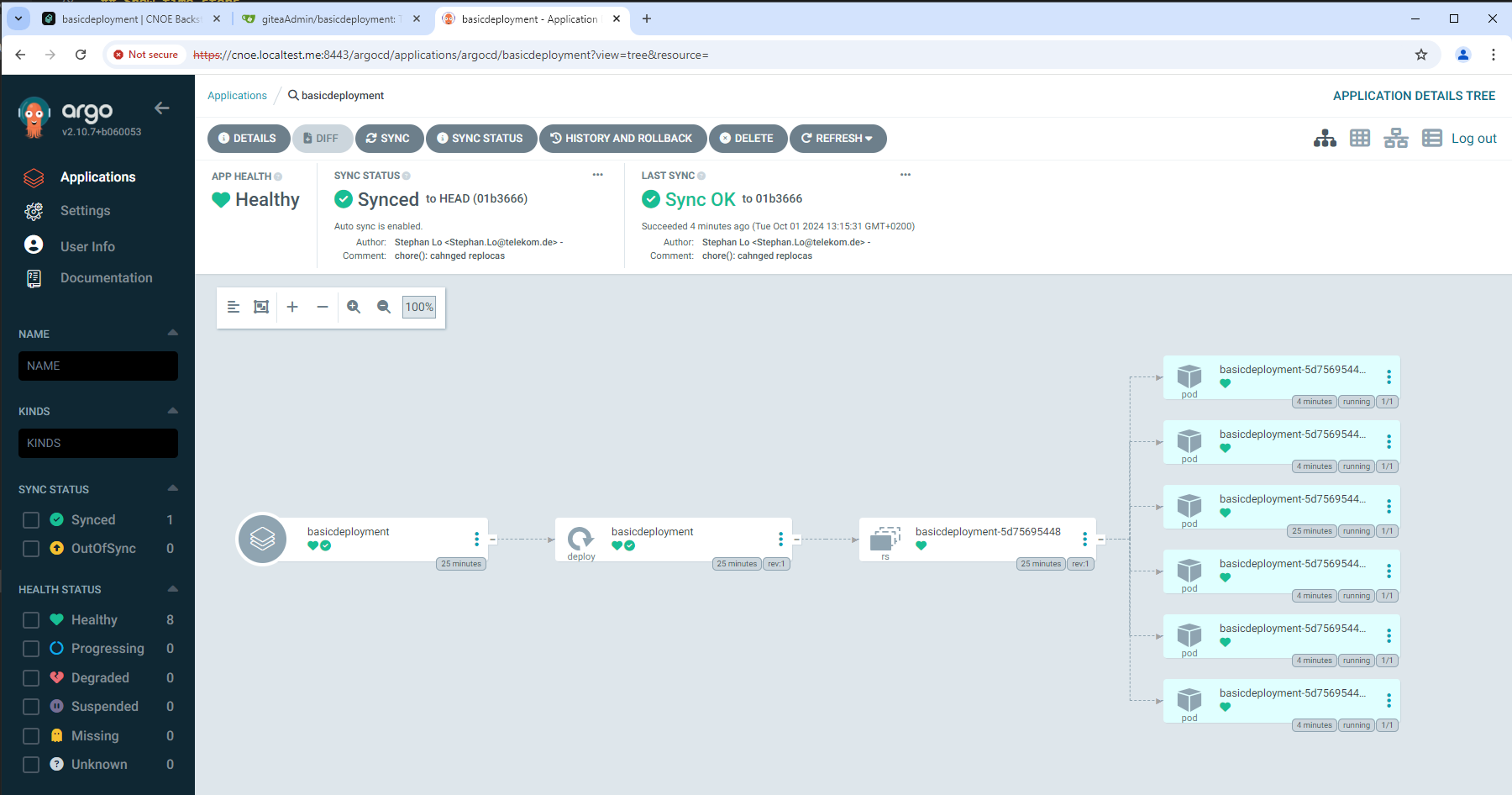

ArgoCD is a Continuous Delivery tool for kubernetes based on GitOps principles.

ELI5: ArgoCD is an application running in kubernetes which monitors Git repositories containing some sort of kubernetes manifests and automatically deploys them to some configured kubernetes clusters.

From ArgoCD’s perspective, applications are defined as custom resources within the kubernetes clusters that ArgoCD monitors. Such a definition describes a source git repository that contains kubernetes manifests, in the form of a helm chart, kustomize, jsonnet definitions or plain yaml files, as well as a target kubernetes cluster and namespace the manifests should be applied to. Thus, ArgoCD is capable of deploying applications to various (remote) clusters and namespaces.
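To make the structure of such a definition concrete, the following sketch builds an Application manifest as a plain object and prints it as JSON. The interface only covers a small subset of the Application spec. The function `buildApplication`, the repository URL, and the names `demo-app` and `demo` are hypothetical.

```typescript
// Minimal shape of an Argo CD Application; only a small subset of fields.
interface ArgoApplication {
  apiVersion: "argoproj.io/v1alpha1";
  kind: "Application";
  metadata: { name: string; namespace: string };
  spec: {
    project: string;
    source: { repoURL: string; path: string; targetRevision: string };
    destination: { server: string; namespace: string };
    syncPolicy?: { automated: { prune: boolean; selfHeal: boolean } };
  };
}

function buildApplication(
  name: string,
  repoURL: string,
  path: string,
  targetNamespace: string,
): ArgoApplication {
  return {
    apiVersion: "argoproj.io/v1alpha1",
    kind: "Application",
    metadata: { name, namespace: "argocd" },
    spec: {
      project: "default",
      // Source: where the manifests live.
      source: { repoURL, path, targetRevision: "HEAD" },
      // Destination: the cluster ArgoCD itself runs in, plus a namespace.
      destination: { server: "https://kubernetes.default.svc", namespace: targetNamespace },
      // Apply changes automatically and correct drift.
      syncPolicy: { automated: { prune: true, selfHeal: true } },
    },
  };
}

// Hypothetical repository and names.
const app = buildApplication(
  "demo-app",
  "https://gitea.cnoe.localtest.me/example/demo-app.git",
  "manifests",
  "demo",
);
console.log(JSON.stringify(app, null, 2));
```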

ArgoCD monitors both the source and the destination. It applies changes from

the git repository that acts as the source of truth for the destination as soon

as they occur, i.e. if a change was pushed to the git repository, the change is

applied to the kubernetes destination by ArgoCD. Subsequently, it checks

whether the desired state was established. For example, it verifies that all

resources were created, enough replicas started, and that all pods are in the

running state and healthy.

An ArgoCD deployment consists of three main components:

The application controller is a kubernetes operator that synchronizes the live state within a kubernetes cluster with the desired state derived from the git sources. It monitors the live state, can detect deviations, and performs corrective actions. Additionally, it can execute hooks on life cycle stages such as pre- and post-sync.

The repository server interacts with git repositories and caches their state, to reduce the amount of polling necessary. Furthermore, it is responsible for generating the kubernetes manifests from the resources within the git repositories, i.e. executing helm or jsonnet templates.

The API Server is a REST/gRPC Service that allows the Web UI and CLI, as well as other API clients, to interact with the system. It also acts as the callback for webhooks particularly from Git repository platforms such as GitHub or Gitlab to reduce repository polling.

The system primarily stores its configuration as kubernetes resources. Thus, other external storage is not vital.

ArgoCD is one of the core components besides gitea/forgejo that is being bootstrapped by the idpbuilder. Future project creation, e.g. through backstage, relies on the availability of ArgoCD.

After the initial bootstrapping phase, effectively all components in the stack that are deployed in kubernetes are managed by ArgoCD. This includes the bootstrapped components of gitea and ArgoCD which are onboarded afterward. Thus, the idpbuilder is only necessary in the bootstrapping phase of the platform and the technical coordination of all components shifts to ArgoCD eventually.

In general, the creation of new projects and applications should take place in backstage. It is a catalog of software components and best practices that allows developers to grasp and to manage their software portfolio. Underneath, however, the deployment of applications and platform components is managed by ArgoCD. Among others, backstage creates Application custom resources to instruct ArgoCD to manage deployments and subsequently report on their current state.

Initially shamelessly copied from the docs

The CNOE docs do somewhat interchange validation and verification but for the most part they adhere to the general definition:

Validation is used when you check your approach before actually executing an action.

Examples:

Verification describes testing if your ’thing’ complies with your spec

Examples:

It seems that both validation and verification within the CNOE framework are not actually handled by some explicit component but should be addressed throughout the system and workflows.

As stated in the docs, validation takes place in all parts of the stack by enforcing strict API usage and policies (signing, mitigations, security scans etc, see usage of kyverno for example), and using code generation (proven code), linting, formatting, LSP. Consequently, validation of source code, templates, etc is more a best practice than a hard fact or feature, and it is up to the user to incorporate it into their workflows and pipelines. This is probably due to the complexity of the entire stack and the individual properties of each component and application.

Verification of artifacts and deployments actually exists in a somewhat similar state. The current CNOE reference-implementation does not provide sufficient verification tooling.

However, as stated in the docs, within the framework cnoe-cli is capable of extremely limited verification of artifacts within kubernetes. The same verification is also available as a step within a backstage plugin. This is pretty much just a wrapper of the cli tool. The tool consumes CRD-like structures defining the state of pods and CRDs and checks for their existence within a live cluster (example).
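The kind of existence check described above can be sketched as follows. This is not the cnoe-cli implementation or its input format. The types `ExpectedState` and `LiveState` and the function `verifyExistence` are simplified, hypothetical stand-ins, and the live cluster is replaced by in-memory data.

```typescript
// Hypothetical, simplified stand-ins for the CRD-like input and the cluster state.
interface ExpectedState {
  pods: Array<{ namespace: string; name: string }>;
  crds: string[]; // e.g. "applications.argoproj.io"
}
interface LiveState {
  pods: Array<{ namespace: string; name: string }>;
  crds: string[];
}

// Returns human-readable findings; an empty list means the check passed.
function verifyExistence(expected: ExpectedState, live: LiveState): string[] {
  const findings: string[] = [];
  for (const crd of expected.crds) {
    if (!live.crds.includes(crd)) findings.push(`missing CRD ${crd}`);
  }
  for (const pod of expected.pods) {
    // Pod names usually carry generated suffixes, so match on the name prefix.
    const found = live.pods.some(
      p => p.namespace === pod.namespace && p.name.startsWith(pod.name),
    );
    if (!found) findings.push(`missing pod ${pod.namespace}/${pod.name}`);
  }
  return findings;
}

// Example data, invented for illustration.
const expectedState: ExpectedState = {
  pods: [{ namespace: "argocd", name: "argocd-server" }, { namespace: "gitea", name: "my-gitea" }],
  crds: ["applications.argoproj.io"],
};
const liveState: LiveState = {
  pods: [{ namespace: "argocd", name: "argocd-server-5c9f7d-abcde" }],
  crds: ["applications.argoproj.io"],
};
console.log(verifyExistence(expectedState, liveState)); // [ 'missing pod gitea/my-gitea' ]
```

A check of this kind only confirms that resources exist. It says nothing about their configuration or authenticity.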

Depending on the aspiration of ‘verification’ this check is rather superficial and might only suffice as an initial smoke test. Furthermore, it seems like the feature is not actually used within the CNOE stacks repo.

For a live product more in depth verification tools and schemes are necessary to verify the correct configuration and authenticity of workloads, which is, in the context of traditional cloud systems, only achievable to a limited degree.

Existing tools within the stack, e.g. Argo, provide some verification capabilities. But further investigation into the general topic is necessary.

To support local development and usage of crossplane compositions, a crossplane provider is needed. Every big hyperscaler already has support in crossplane (e.g. provider-gcp and provider-aws).

Each provider has two main parts, the provider config and implementations of the cloud resources.

The provider config takes the credentials to log into the cloud provider and provides a token (e.g. a kube config or even a service account) that the implementations can use to provision cloud resources.

The implementations of the cloud resources reflect each type of cloud resource, typical resources are:

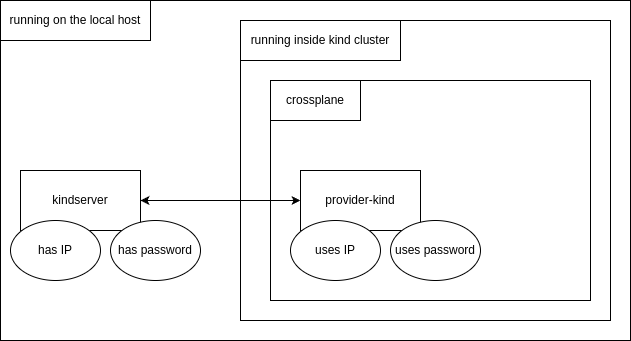

To have the crossplane concepts applied, the provider-kind consists of two components: kindserver and provider-kind.

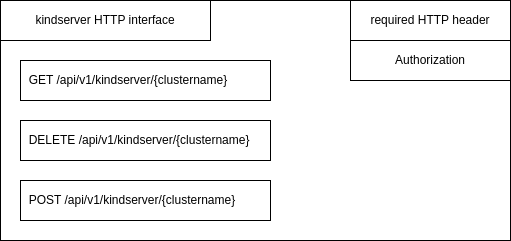

The kindserver is used to manage local kind clusters. It provides an HTTP REST interface to create, delete, and get information about a running cluster, using the Authorization HTTP header field as a password:

The two properties needed to connect the provider-kind to kindserver are the IP address and password of kindserver. The IP address is required because the kindserver needs to be executed outside the kind cluster, directly on the local machine, as it needs to control kind itself:

The provider-kind provides two crossplane elements, the ProviderConfig and KindCluster as the (only) cloud resource. The ProviderConfig is configured with the IP address and password of the running kindserver. The KindCluster type is configured to use the provided ProviderConfig. Kind clusters can be managed by adding and removing kubernetes manifests of type KindCluster. The crossplane reconciliation loop uses the kindserver HTTP GET method to determine whether a cluster needs to be created by HTTP POST or removed by HTTP DELETE.
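The decision logic of one reconciliation pass can be sketched as follows. This is not the provider-kind source code. The interface `KindServerClient`, the function `reconcile`, and the in-memory `fakeClient` are hypothetical, and the calls are kept synchronous for brevity, whereas a real HTTP client would be asynchronous.

```typescript
// Hypothetical client abstraction over the kindserver REST interface.
interface KindServerClient {
  exists(name: string): boolean;                   // would issue HTTP GET
  create(name: string, kindConfig: string): void;  // would issue HTTP POST
  remove(name: string): void;                      // would issue HTTP DELETE
}

type Action = "created" | "deleted" | "none";

// One reconciliation pass for a single KindCluster resource.
function reconcile(
  client: KindServerClient,
  name: string,
  kindConfig: string,
  markedForDeletion: boolean,
): Action {
  const present = client.exists(name); // observe the current state first
  if (markedForDeletion) {
    if (!present) return "none";
    client.remove(name);
    return "deleted";
  }
  if (present) return "none"; // desired state already reached
  client.create(name, kindConfig);
  return "created";
}

// In-memory fake used to exercise the logic without a running kindserver.
function fakeClient(clusters: Set<string>): KindServerClient {
  return {
    exists: name => clusters.has(name),
    create: name => { clusters.add(name); },
    remove: name => { clusters.delete(name); },
  };
}

const clusters = new Set<string>();
const client = fakeClient(clusters);
console.log(reconcile(client, "example-kind-cluster", "kind: Cluster", false)); // created
console.log(reconcile(client, "example-kind-cluster", "kind: Cluster", false)); // none
console.log(reconcile(client, "example-kind-cluster", "kind: Cluster", true));  // deleted
```

Running the same pass repeatedly is safe, because each pass observes the current state before acting.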

The password used by ProviderConfig is configured as a kubernetes secret, while the kindserver IP address is configured inside the ProviderConfig as the field endpoint.

Once provider-kind has created a new cluster by processing a KindCluster manifest, the two providers which are used to deploy applications, provider-helm and provider-kubernetes, can be configured to use the KindCluster.

A Crossplane composition can be created by concatenating different providers and their objects. A composition is managed as a custom resource definition and defined in a single file.

Two kubernetes manifests are defined by provider-kind: ProviderConfig and KindCluster. The third needed kubernetes object is a secret.

The following inputs are needed when developing a provider-kind. They are supplied through ProviderConfig and KindCluster.

The following outputs arise, all of them from KindCluster.

The kindserver password needs to be defined first. It is realized as a kubernetes secret and contains the password with which the kindserver has been configured:

apiVersion: v1
data:
  credentials: MTIzNDU=
kind: Secret
metadata:
  name: kind-provider-secret
  namespace: crossplane-system
type: Opaque
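The data section of a kubernetes secret holds base64-encoded values. As a small sketch, the following Go program shows how the credentials value above relates to the plain password; the helper names encodeSecretValue and decodeSecretValue are made up for this illustration.

```go
package main

import (
	"encoding/base64"
	"fmt"
)

// encodeSecretValue returns the base64 form that kubernetes expects in the
// data section of a Secret manifest.
func encodeSecretValue(plain string) string {
	return base64.StdEncoding.EncodeToString([]byte(plain))
}

// decodeSecretValue reverses encodeSecretValue.
func decodeSecretValue(encoded string) (string, error) {
	raw, err := base64.StdEncoding.DecodeString(encoded)
	if err != nil {
		return "", err
	}
	return string(raw), nil
}

func main() {
	fmt.Println(encodeSecretValue("12345")) // MTIzNDU=

	plain, err := decodeSecretValue("MTIzNDU=")
	if err != nil {
		panic(err)
	}
	fmt.Println(plain) // 12345
}
```

The example password 12345 is only suitable for local experiments.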

The IP address of the kindserver endpoint is configured in the provider-kind ProviderConfig. This config also references the kindserver password (kind-provider-secret):

apiVersion: kind.crossplane.io/v1alpha1
kind: ProviderConfig
metadata:
  name: kind-provider-config
spec:
  credentials:
    source: Secret
    secretRef:
      namespace: crossplane-system
      name: kind-provider-secret
      key: credentials
  endpoint:
    url: https://172.18.0.1:7443/api/v1/kindserver

It is suggested that the kindserver runs on the IP of the docker host, so that all kind clusters can access it without extra routing.

The kind config is provided as the field kindConfig in each KindCluster manifest. The manifest also references the provider-kind ProviderConfig (kind-provider-config in the providerConfigRef field):

apiVersion: container.kind.crossplane.io/v1alpha1
kind: KindCluster
metadata:
  name: example-kind-cluster
spec:
  forProvider:
    kindConfig: |
      kind: Cluster
      apiVersion: kind.x-k8s.io/v1alpha4
      nodes:
      - role: control-plane
        kubeadmConfigPatches:
        - |
          kind: InitConfiguration
          nodeRegistration:
            kubeletExtraArgs:
              node-labels: "ingress-ready=true"
        extraPortMappings:
        - containerPort: 80
          hostPort: 80
          protocol: TCP
        - containerPort: 443
          hostPort: 443
          protocol: TCP
      containerdConfigPatches:
      - |-
        [plugins."io.containerd.grpc.v1.cri".registry.mirrors."gitea.cnoe.localtest.me:443"]
          endpoint = ["https://gitea.cnoe.localtest.me"]
        [plugins."io.containerd.grpc.v1.cri".registry.configs."gitea.cnoe.localtest.me".tls]
          insecure_skip_verify = true
  providerConfigRef:
    name: kind-provider-config
  writeConnectionSecretToRef:
    namespace: default
    name: kind-connection-secret

After the kind cluster has been created, its kube config is stored in the kubernetes secret kind-connection-secret, which writeConnectionSecretToRef references.

The three outputs can be retrieved by getting the KindCluster manifest after the cluster has been created. The KindCluster is available for reading even before the cluster has been created, but the three output fields are empty until then. The ready state also switches from false to true once the cluster has been created.

These fields can be retrieved with a standard kubectl get command:

$ kubectl get kindclusters kindcluster-fw252 -o yaml
...
status:
  atProvider:
    internalIP: 192.168.199.19
    kubernetesVersion: v1.31.0
  conditions:
  - lastTransitionTime: "2024-11-12T18:22:39Z"
    reason: Available
    status: "True"
    type: Ready
  - lastTransitionTime: "2024-11-12T18:21:38Z"
    reason: ReconcileSuccess
    status: "True"
    type: Synced

The kube config is stored in a kubernetes secret (kind-connection-secret), which can be accessed after the cluster has been created:

$ kubectl get kindclusters kindcluster-fw252 -o yaml
...
  writeConnectionSecretToRef:
    name: kind-connection-secret
    namespace: default
...
$ kubectl get secret kind-connection-secret
NAME                     TYPE                                DATA   AGE
kind-connection-secret   connection.crossplane.io/v1alpha1   2      107m

The API endpoint of the new cluster (endpoint) and its kube config (kubeconfig) are stored in that secret. These values are set in the Observe function of the kind controller of provider-kind. They are set with the special crossplane structure managed.ExternalObservation.

The reconciler loop is the heart of every crossplane provider. Because it runs asynchronously, it is best described in words:

Internally, the Connect function in the kindcluster controller internal/controller/kindcluster/kindcluster.go is triggered first, to set up the provider and configure it with the password and IP address of the kindserver.

Once provider-kind has been configured with the kindserver secret and its ProviderConfig, the provider is ready to be activated by applying a KindCluster manifest to kubernetes.

When the user applies a new KindCluster manifest, an observe loop is started. The provider regularly triggers the Observe function of the controller. As nothing has been created yet, the controller returns managed.ExternalObservation{ResourceExists: false} to signal that the kind cluster resource does not exist yet.

As there is a kindserver SDK available, the controller uses the Get function of the SDK to query the kindserver.

The KindCluster is already applied and can be retrieved with kubectl get kindclusters. As the cluster has not been created yet, its readiness state is false.

In parallel, the Create function is triggered in the controller. This function has access to the desired kind config cr.Spec.ForProvider.KindConfig and the name of the kind cluster cr.ObjectMeta.Name. It can now call the kindserver SDK to create a new cluster with the given config and name. The Create function is not supposed to run for long, so in provider-kind it returns immediately. The kindserver already knows the name of the new cluster and, even though the cluster is not ready yet, responds with a partial success.

The observe loop is triggered regularly in parallel. It is triggered after the create call but before the kind cluster has been created. This time it gets one step further: it learns from the kindserver that the cluster is already known but has not finished creating yet.

Once the cluster has finished creating, the kindserver has all the information that provider-kind needs: the API server endpoint of the new cluster and its kube config. After another round of the observe loop, the controller has the full set of information about the kind cluster (cluster ready, its API server endpoint, and its kube config). When the kindserver SDK has received this information in the form of a JSON file, the controller can signal successful creation of the cluster. It does so by returning the following structure from the Observe function:

return managed.ExternalObservation{
	ResourceExists:   true,
	ResourceUpToDate: true,
	ConnectionDetails: managed.ConnectionDetails{
		xpv1.ResourceCredentialsSecretEndpointKey:   []byte(clusterInfo.Endpoint),
		xpv1.ResourceCredentialsSecretKubeconfigKey: []byte(clusterInfo.KubeConfig),
	},
}, nil

Note that the values in managed.ConnectionDetails are automatically written to the kubernetes secret that writeConnectionSecretToRef of the KindCluster points to, so the secret receives the API server endpoint and its kube config.

The Observe function also sets the availability flag before returning, which marks the KindCluster as ready:

cr.Status.SetConditions(xpv1.Available())

Before returning, it also sets the information that is transferred into the fields of the KindCluster which can be retrieved by a kubectl get, namely the kubernetesVersion and the internalIP fields:

cr.Status.AtProvider.KubernetesVersion = clusterInfo.K8sVersion

cr.Status.AtProvider.InternalIP = clusterInfo.NodeIp

Now the KindCluster is set up completely. When its data is retrieved by kubectl get, all data is available and its readiness is set to true.
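The three phases of the observe loop described above can be summarized in a small, self-contained Go sketch. It deliberately avoids the crossplane-runtime dependency: the types clusterInfo and observation are simplified, hypothetical stand-ins for the kindserver SDK answer and for managed.ExternalObservation. What provider-kind returns while the cluster is still being created is an assumption here.

```go
package main

import "fmt"

// clusterInfo is a hypothetical stand-in for the answer of the kindserver SDK.
type clusterInfo struct {
	Known      bool   // the kindserver knows a cluster with this name
	Ready      bool   // the cluster has finished creating
	Endpoint   string // API server endpoint, set once Ready
	KubeConfig string // kube config, set once Ready
}

// observation is a simplified stand-in for managed.ExternalObservation.
type observation struct {
	ResourceExists    bool
	ResourceUpToDate  bool
	ConnectionDetails map[string][]byte
}

// observe maps the kindserver answer to an observation and reports whether
// the KindCluster may be marked as available.
func observe(info clusterInfo) (observation, bool) {
	switch {
	case !info.Known:
		// Nothing has been created yet: this is what triggers Create.
		return observation{ResourceExists: false}, false
	case !info.Ready:
		// Assumption: Create was called, the cluster is still being built.
		return observation{ResourceExists: true, ResourceUpToDate: true}, false
	default:
		// Cluster is ready: publish endpoint and kube config.
		return observation{
			ResourceExists:   true,
			ResourceUpToDate: true,
			ConnectionDetails: map[string][]byte{
				"endpoint":   []byte(info.Endpoint),
				"kubeconfig": []byte(info.KubeConfig),
			},
		}, true
	}
}

func main() {
	phases := []clusterInfo{
		{},            // before Create
		{Known: true}, // after Create, still creating
		{Known: true, Ready: true, Endpoint: "https://172.18.0.1:6443", KubeConfig: "..."},
	}
	for _, info := range phases {
		obs, available := observe(info)
		fmt.Println(obs.ResourceExists, available, len(obs.ConnectionDetails))
	}
}
```

The endpoint value in main is a made-up example; the keys endpoint and kubeconfig match the two entries of the connection secret mentioned earlier.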

The observe loop continues to be called to enable drift detection. That detection is currently not implemented, but it is prepared for future implementations. If the Observe function detected that the kind cluster with a given name was set up with a kind config other than the desired one, the controller would call its Update function, which would delete the currently running kind cluster and recreate it with the desired kind config.

When the user deletes the KindCluster manifest at a later stage, the Delete function of the controller is triggered and calls the kindserver SDK to delete the cluster with the given name. The observe loop acknowledges a successful deletion when it receives kind cluster not found. Otherwise, the controller keeps triggering the Delete function in a loop until the kind cluster has been deleted.

These steps make up the reconciler loop.

Each newly created kind cluster has a practically random kubernetes API server endpoint. As the IP address of a new kind cluster cannot be determined before creation, the kindserver manages the API server field of the kind config. It maps the kubernetes API endpoints of all kind clusters to its own IP address, but on different ports. That guarantees that all kind clusters can access the kubernetes API endpoints of all other kind clusters by using the docker host IP of the kindserver itself. This is needed because the kube config hardcodes the kubernetes API server endpoint. With the docker host IP and a different port per cluster, a kube config from one kind cluster also works from any other kind cluster.

The management of the kind config in the kindserver is implemented in the Post function of the kindserver main.go file.
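To illustrate the idea of one host IP with a distinct port per cluster, here is a minimal Go sketch. It is not taken from the kindserver main.go: the type endpointMapper, its methods, and the first port 6443 are assumptions made for this example. The networking fields apiServerAddress and apiServerPort are part of the kind v1alpha4 cluster config; whether the kindserver sets exactly these fields is an assumption as well.

```go
package main

import "fmt"

// endpointMapper hands out one port per kind cluster on a fixed host IP.
type endpointMapper struct {
	hostIP   string
	nextPort int
	ports    map[string]int
}

func newEndpointMapper(hostIP string, firstPort int) *endpointMapper {
	return &endpointMapper{hostIP: hostIP, nextPort: firstPort, ports: map[string]int{}}
}

// portFor returns the port reserved for the cluster and reserves a new one
// on first use, so repeated calls for the same cluster are stable.
func (m *endpointMapper) portFor(cluster string) int {
	if port, ok := m.ports[cluster]; ok {
		return port
	}
	port := m.nextPort
	m.nextPort++
	m.ports[cluster] = port
	return port
}

// endpointFor returns the API server URL that would end up in the kube config.
func (m *endpointMapper) endpointFor(cluster string) string {
	return fmt.Sprintf("https://%s:%d", m.hostIP, m.portFor(cluster))
}

// networking renders a kind config stanza that pins the API server address.
func (m *endpointMapper) networking(cluster string) string {
	return fmt.Sprintf("networking:\n  apiServerAddress: %q\n  apiServerPort: %d\n",
		m.hostIP, m.portFor(cluster))
}

func main() {
	m := newEndpointMapper("172.18.0.1", 6443)
	fmt.Println(m.endpointFor("cluster-a"))
	fmt.Println(m.endpointFor("cluster-b"))
	fmt.Print(m.networking("cluster-a"))
}
```

Because every cluster is reachable under the same docker host IP, a kube config that contains one of these endpoints stays valid no matter which kind cluster uses it.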

The official way to create crossplane providers is to use the provider-template. Follow these steps to create a new provider.

First, clone the provider-template. The commit ID at the time of writing this how-to was 2e0b022c22eb50a8f32de2e09e832f17161d7596. Rename the new folder after cloning.

git clone https://github.com/crossplane/provider-template.git

mv provider-template provider-kind

cd provider-kind/

The information in the provided README.md is incomplete. Follow these steps to get it running:

Please use bash for the next commands (${type,,}, for example, is not a mistake).

make submodules

export provider_name=Kind # Camel case, e.g. GitHub

make provider.prepare provider=${provider_name}

export group=container # lower case e.g. core, cache, database, storage, etc.

export type=KindCluster # Camel case, e.g. Bucket, Database, CacheCluster, etc.

make provider.addtype provider=${provider_name} group=${group} kind=${type}

sed -i "s/sample/${group}/g" apis/${provider_name,,}.go

sed -i "s/mytype/${type,,}/g" internal/controller/${provider_name,,}.go

Patch the Makefile:

 dev: $(KIND) $(KUBECTL)
 	@$(INFO) Creating kind cluster
+	@$(KIND) delete cluster --name=$(PROJECT_NAME)-dev
 	@$(KIND) create cluster --name=$(PROJECT_NAME)-dev
 	@$(KUBECTL) cluster-info --context kind-$(PROJECT_NAME)-dev
-	@$(INFO) Installing Crossplane CRDs
-	@$(KUBECTL) apply --server-side -k https://github.com/crossplane/crossplane//cluster?ref=master
+	@$(INFO) Installing Crossplane
+	@helm install crossplane --namespace crossplane-system --create-namespace crossplane-stable/crossplane --wait
 	@$(INFO) Installing Provider Template CRDs
 	@$(KUBECTL) apply -R -f package/crds
 	@$(INFO) Starting Provider Template controllers

Generate, build and execute the new provider-kind:

make generate

make build

make dev

Now it’s time to add the required fields (internalIP, endpoint, etc.) to the spec fields in the Go API sources found in:

The file apis/kind.go may also be modified. In our case, the word sample can be replaced with container.

When that’s done, the yaml specifications need to be modified to include the required fields (internalIP, endpoint, etc.) as well.

Next, a kindserver SDK can be implemented. It is a helper class that encapsulates the get, create, and delete HTTP calls to the kindserver. The constructor stores the connection information (kindserver IP address and password).
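Such a helper could look roughly like the following Go sketch. It is not the SDK from the provider-kind repository: the names Client, NewClient, and call, the URL layout (base URL plus cluster name), and the response handling are assumptions. Only the three HTTP methods and the use of the Authorization header as password come from the description above. The function newFakeServer is a local test double so that the example runs without a real kindserver.

```go
package main

import (
	"bytes"
	"fmt"
	"io"
	"net/http"
	"net/http/httptest"
)

// Client is a hypothetical kindserver helper; names and URL layout are assumptions.
type Client struct {
	baseURL  string
	password string
	httpc    *http.Client
}

// NewClient stores the connection information for later calls.
func NewClient(baseURL, password string) *Client {
	return &Client{baseURL: baseURL, password: password, httpc: &http.Client{}}
}

// call sends one request and returns the status code and the response body.
func (c *Client) call(method, name string, body []byte) (int, []byte, error) {
	req, err := http.NewRequest(method, c.baseURL+"/"+name, bytes.NewReader(body))
	if err != nil {
		return 0, nil, err
	}
	req.Header.Set("Authorization", c.password) // header field used as password
	resp, err := c.httpc.Do(req)
	if err != nil {
		return 0, nil, err
	}
	defer resp.Body.Close()
	data, err := io.ReadAll(resp.Body)
	return resp.StatusCode, data, err
}

// Get queries the state of the named cluster.
func (c *Client) Get(name string) (int, []byte, error) {
	return c.call(http.MethodGet, name, nil)
}

// Create asks for a new cluster with the given kind config.
func (c *Client) Create(name string, kindConfig []byte) (int, []byte, error) {
	return c.call(http.MethodPost, name, kindConfig)
}

// Delete removes the named cluster.
func (c *Client) Delete(name string) (int, []byte, error) {
	return c.call(http.MethodDelete, name, nil)
}

// newFakeServer starts a local test double that checks the password and
// echoes the method and path it received.
func newFakeServer(password string) *httptest.Server {
	return httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		if r.Header.Get("Authorization") != password {
			w.WriteHeader(http.StatusUnauthorized)
			return
		}
		fmt.Fprintf(w, "%s %s", r.Method, r.URL.Path)
	}))
}

func main() {
	srv := newFakeServer("12345")
	defer srv.Close()

	c := NewClient(srv.URL, "12345")
	status, body, err := c.Create("demo", []byte("kind: Cluster\n"))
	if err != nil {
		panic(err)
	}
	fmt.Println(status, string(body))
}
```

Keeping the HTTP details in one place means the controller functions only deal with cluster names and kind configs.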

After that, we can use the kindclient SDK in the kindcluster controller internal/controller/kindcluster/kindcluster.go.

Finally, we can update the Makefile to better handle the creation of the primary kind cluster and to add a cluster role binding so that crossplane can access the KindCluster objects. Adding examples and updating the README.md finishes the development.

All these steps are documented in: https://forgejo.edf-bootstrap.cx.fg1.ffm.osc.live/DevFW/provider-kind/pulls/1

Every provider-kind release needs to be tagged first in the git repository:

git tag v0.1.0

git push origin v0.1.0

Next, make sure docker is logged in to the target registry:

docker login forgejo.edf-bootstrap.cx.fg1.ffm.osc.live

Now it’s time to specify the target registry, build the provider-kind for ARM64 and AMD64 CPU architectures and publish it to the target registry:

XPKG_REG_ORGS_NO_PROMOTE="" XPKG_REG_ORGS="forgejo.edf-bootstrap.cx.fg1.ffm.osc.live/richardrobertreitz" make build.all publish BRANCH_NAME=main

The parameter BRANCH_NAME=main is needed when tagging and publishing happen from another branch. The version of provider-kind is that of the tag name. The output of the make call then ends like this: